Building a Next-Generation Data Foundation for Enterprise Intelligence

Enterprise data architecture has evolved dramatically from traditional data warehouses to modern data lakes, and increasingly to hybrid lakehouse architectures that combine the best of both paradigms. DS STREAM delivers comprehensive data lake and data warehouse solutions that provide the scalable, performant, and cost-effective data foundation necessary for advanced analytics, machine learning, and data-driven decision-making. With 150+ data engineering specialists and over a decade of implementation expertise across diverse industries, we architect and implement data storage solutions that transform raw data into strategic business assets.

Today's enterprises generate data from hundreds or thousands of sources in structured, semi-structured, and unstructured formats at unprecedented volumes and velocities. Traditional data warehouses, while excellent for structured relational analytics, struggle with the variety and volume demands of modern data ecosystems. Data lakes provide the flexibility and scalability required but often become ungoverned "data swamps" without proper architecture and data management practices. DS STREAM implements modern data architecture patterns that deliver both flexibility and governance, enabling organizations to harness all their data while maintaining quality, security, and performance.

Strategic Importance of Modern Data Architecture

Data lakes and data warehouses represent foundational infrastructure investments that enable or constrain an organization's analytical capabilities for years to come. Organizations with well-architected data platforms achieve demonstrable competitive advantages: faster time-to-insight enabling rapid response to market conditions, comprehensive data accessibility supporting self-service analytics across business units, reduced data duplication and storage costs through centralized repositories, and unified data governance ensuring compliance and data quality.

DS STREAM's approach recognizes that data architecture decisions must align with broader business strategy, technology roadmaps, and organizational capabilities. We work closely with C-level executives and technology leadership to understand current limitations, future requirements, and organizational readiness for modern data architectures. We balance technical excellence with practical implementation considerations, so that each solution is not only architecturally sound but also operationally sustainable and aligned with available resources and expertise.

Modern Data Lake Architecture and Design Principles

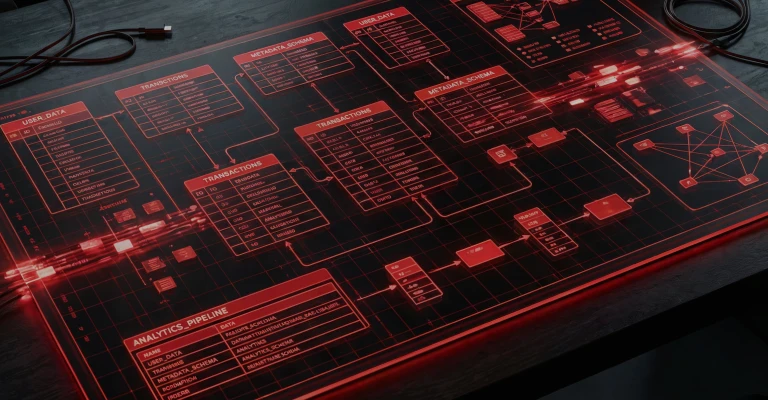

DS STREAM architects data lakes based on proven design principles that prevent the common pitfall of data lakes degrading into unmanageable data swamps. Our data lake implementations incorporate comprehensive metadata management, strong data governance frameworks, automated data quality controls, and efficient data organization strategies that ensure data remains discoverable, understandable, and valuable throughout its lifecycle.

Modern data lake architecture encompasses multiple zones, each optimized for different use cases and data maturity levels:

Raw Zone (Landing): Immutable storage of source data in native formats with comprehensive lineage tracking, ensuring complete audit trail and ability to reprocess from original sources

Cleansed Zone (Bronze): Data with basic quality validation, standardized formats, and deduplication while preserving comprehensive history for compliance and reprocessing requirements

Curated Zone (Silver): Business-logic-transformed data with consistent schemas, enriched with reference data, optimized for analytical query patterns and downstream consumption

Consumption Zone (Gold): Highly aggregated, business-ready datasets optimized for specific analytical use cases, dashboards, and reporting with pre-calculated metrics and denormalized structures

Sandbox Zone: Isolated experimentation environments for data scientists and analysts with governed access to production data for model development and exploratory analysis

Archive Zone: Cost-optimized long-term storage for historical data meeting retention requirements while minimizing storage costs through lifecycle policies
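The progression from raw to consumption-ready data described above can be sketched in a few lines of Python. This is a minimal illustration, not DS STREAM's implementation: the record fields (`order_id`, `store`, `amount`), the function names, the region lookup, and the in-memory lists standing in for object storage are all assumptions made for the example.

```python
from collections import defaultdict

# Hypothetical raw records exactly as they might land from a source system.
RAW = [
    {"order_id": "1", "store": " Berlin ", "amount": "10.50"},
    {"order_id": "1", "store": " Berlin ", "amount": "10.50"},  # duplicate
    {"order_id": "2", "store": "berlin", "amount": "4.50"},
    {"order_id": "3", "store": "Paris", "amount": "bad"},  # fails validation
]

def to_cleansed(raw):
    """Cleansed zone: basic validation, standardized formats, deduplication."""
    seen, out = set(), []
    for rec in raw:
        try:
            amount = float(rec["amount"])
        except ValueError:
            continue  # a real pipeline would likely quarantine, not drop
        if rec["order_id"] in seen:
            continue
        seen.add(rec["order_id"])
        out.append({
            "order_id": int(rec["order_id"]),
            "store": rec["store"].strip().title(),
            "amount": amount,
        })
    return out

def to_curated(cleansed, regions):
    """Curated zone: consistent schema enriched with reference data."""
    return [{**rec, "region": regions.get(rec["store"], "UNKNOWN")}
            for rec in cleansed]

def to_consumption(curated):
    """Consumption zone: a pre-calculated metric (revenue by region)."""
    totals = defaultdict(float)
    for rec in curated:
        totals[rec["region"]] += rec["amount"]
    return dict(totals)

cleansed = to_cleansed(RAW)
curated = to_curated(cleansed, {"Berlin": "DACH", "Paris": "FR"})
gold = to_consumption(curated)
print(gold)
```

Note that `RAW` is never modified: each zone is derived from the one before it, which is what makes reprocessing from original sources possible.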

Our data lake implementations leverage cloud object storage including Google Cloud Storage, Azure Data Lake Storage Gen2, and Amazon S3, configured with intelligent tiering and lifecycle policies matched to actual access patterns. We implement open table formats including Apache Iceberg, Delta Lake, and Apache Hudi, which provide ACID transactions, schema evolution, time travel capabilities, and efficient incremental processing, and which transform data lakes from simple storage repositories into sophisticated analytical platforms.

Enterprise Data Warehouse Design and Optimization

DS STREAM delivers modern cloud data warehouse solutions leveraging Google BigQuery, Azure Synapse Analytics, Snowflake, and Amazon Redshift architectures optimized for analytical workloads, complex queries, and business intelligence applications. Our warehouse designs balance normalization for data integrity with denormalization for query performance, implementing dimensional modeling, star schemas, and snowflake schemas based on specific analytical requirements and query patterns.

Data warehouse architecture encompasses critical design decisions including partitioning strategies for query pruning and performance optimization, clustering approaches for related data co-location, materialized views for frequently accessed aggregations, and incremental refresh strategies minimizing computational overhead. DS STREAM implements comprehensive workload management ensuring analytical queries don't impact operational systems, resource allocation strategies preventing query interference, and cost controls preventing runaway query expenses.
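To make partition pruning concrete, the sketch below filters a list of files by the date encoded in their paths before any data is read. It is a simplified illustration under stated assumptions: the Hive-style `event_date=YYYY-MM-DD` path layout, the file names, and the helper functions are hypothetical, and real engines perform this step inside the query planner.

```python
from datetime import date

# Hypothetical Hive-style partition layout: one directory per event date.
PARTITIONS = [
    "sales/event_date=2024-01-01/part-0.parquet",
    "sales/event_date=2024-01-02/part-0.parquet",
    "sales/event_date=2024-01-03/part-0.parquet",
    "sales/event_date=2024-02-01/part-0.parquet",
]

def partition_date(path):
    """Extract the partition value from a segment like event_date=2024-01-02."""
    for segment in path.split("/"):
        if segment.startswith("event_date="):
            return date.fromisoformat(segment.split("=", 1)[1])
    raise ValueError(f"no event_date partition in {path!r}")

def prune(paths, start, end):
    """Keep only files whose partition date falls inside [start, end]."""
    return [p for p in paths if start <= partition_date(p) <= end]

# A query filtered to 2-31 January only needs two of the four files.
selected = prune(PARTITIONS, date(2024, 1, 2), date(2024, 1, 31))
print(selected)
```

The saving comes from skipping whole files based on metadata alone, which is why the partition column should match the filters that queries use most often.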

Modern cloud data warehouses provide separation of storage and compute enabling independent scaling, but effective utilization requires careful architecture. We implement multi-cluster warehouses for concurrent workload isolation, auto-scaling policies responding to query demand, result caching strategies reducing redundant computation, and query optimization techniques including statistics management, distribution keys, and sort keys that dramatically improve performance while reducing costs.

Lakehouse Solutions: Unifying Data Lakes and Data Warehouses

The lakehouse architecture represents the convergence of data lake flexibility with data warehouse performance and governance. DS STREAM implements lakehouse solutions using Databricks Delta Lake, Apache Iceberg, and native cloud lakehouse capabilities that enable organizations to consolidate data architecture, reduce data duplication, and simplify operational complexity while maintaining the flexibility to support diverse analytical workloads from SQL analytics to machine learning.

Lakehouse architectures provide transformative capabilities including ACID transactions on data lakes ensuring consistency for concurrent read/write operations, time travel enabling historical queries and reproducible analysis, schema enforcement preventing data quality issues, and unified batch and streaming processing simplifying architecture. DS STREAM's lakehouse implementations enable direct SQL queries against data lake storage, eliminating ETL overhead from lakes to warehouses while providing warehouse-class query performance through optimizations including data clustering, Z-ordering, and dynamic file pruning.
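The idea behind Z-ordering can be shown with a toy example. The sketch below interleaves the bits of two integer columns into a single Morton key, sorts rows by that key, and then counts how many files a filter would need to scan when each file keeps min/max statistics. It is an assumption-laden illustration: the function names, the 4x4 grid of values, and the four-rows-per-file layout are invented for the example, and production table formats implement this differently in detail.

```python
def interleave_bits(x, y, bits=16):
    """Interleave the low `bits` bits of x and y into one Z-order (Morton) key."""
    key = 0
    for i in range(bits):
        key |= ((x >> i) & 1) << (2 * i)      # x bits go to even positions
        key |= ((y >> i) & 1) << (2 * i + 1)  # y bits go to odd positions
    return key

def z_order(rows, bits=16):
    """Sort (x, y) rows by their interleaved key."""
    return sorted(rows, key=lambda r: interleave_bits(r[0], r[1], bits))

def chunk(rows, size):
    """Split rows into consecutive 'files' of `size` rows each."""
    return [rows[i:i + size] for i in range(0, len(rows), size)]

def files_to_scan(files, y_value):
    """Count files whose min/max statistics on y could contain y_value."""
    return sum(
        1 for f in files
        if min(r[1] for r in f) <= y_value <= max(r[1] for r in f)
    )

rows = [(x, y) for x in range(4) for y in range(4)]
linear_files = chunk(sorted(rows), 4)  # plain sort on x, then y
z_files = chunk(z_order(rows), 4)      # Z-ordered layout

print(files_to_scan(linear_files, 3), files_to_scan(z_files, 3))
```

On this small grid, a filter on `y` alone touches all four files under the plain sort but only two under the Z-ordered layout. The trade-off is that a filter on `x` alone now touches two files instead of one, so Z-ordering suits workloads that filter on several columns.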

Our lakehouse solutions provide significant advantages: reduced total cost of ownership by eliminating duplicate storage and complex ETL between lakes and warehouses, simplified data architecture reducing operational complexity and failure points, enhanced data freshness with minimal latency between raw ingestion and analytical availability, and unified governance with consistent security and access control across all data. Organizations implementing lakehouse architectures typically achieve a 40-60% cost reduction compared to maintaining separate lake and warehouse infrastructures.

Strategic Data Organization and Schema Design

Effective data organization separates high-performing data platforms from those that degrade over time into unusable complexity. DS STREAM implements comprehensive data organization strategies encompassing logical data modeling, physical storage optimization, namespace management, and access control structures that ensure data remains discoverable, performant, and secure as it scales from gigabytes to petabytes.

Our data organization approach includes establishing enterprise data models aligning with business domains and organizational structure, implementing consistent naming conventions ensuring intuitive data discovery, defining clear data ownership and stewardship responsibilities, and creating logical data hierarchies reflecting business relationships. Physical organization strategies include intelligent partitioning schemes supporting efficient query pruning, file sizing optimization balancing query performance with storage efficiency, and format selection considering compression, query patterns, and ecosystem compatibility.
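File sizing optimization often comes down to compacting many small files into fewer files near a target size. The following sketch plans such a compaction with a simple greedy grouping. The 128 MB target, the function name, and the sample file sizes are assumptions for illustration; an appropriate target depends on the engine and the workload.

```python
def plan_compaction(file_sizes_mb, target_mb=128):
    """Greedily group files, in order, into batches of at most target_mb.

    A single file larger than the target ends up in a batch of its own.
    """
    batches, current, current_size = [], [], 0
    for size in file_sizes_mb:
        # Close the current batch if adding this file would exceed the target.
        if current and current_size + size > target_mb:
            batches.append(current)
            current, current_size = [], 0
        current.append(size)
        current_size += size
    if current:
        batches.append(current)
    return batches

# Seven small files are rewritten as four larger ones.
plan = plan_compaction([10, 40, 64, 30, 120, 5, 5], target_mb=128)
print(plan)
```

Fewer, larger files reduce per-file overhead for query engines, while an upper bound on file size keeps individual reads and rewrites manageable.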

Schema design represents critical architecture decisions with long-term implications. DS STREAM implements schema evolution strategies supporting backward and forward compatibility, versioning approaches enabling controlled schema changes without breaking downstream consumers, and migration patterns for seamless transition between schema versions. We balance schema-on-write approaches ensuring data quality at ingestion with schema-on-read flexibility enabling exploratory analysis, selecting optimal strategies based on data characteristics and consumption patterns.
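A backward-compatibility check of the kind described above might look like the following sketch. It is a deliberately simplified version of the rules used by schema-aware serialization formats: added fields need a default, removed fields are tolerated, and any type change is flagged. The dictionary-based schema representation, the function name, and the example fields are hypothetical.

```python
def backward_compat_problems(old, new):
    """List reasons why `new` could not read data written with `old`.

    Schemas map field name -> {"type": str, "default": optional value}.
    An empty list means the change is backward compatible under these rules.
    """
    problems = []
    for name, spec in new.items():
        if name not in old:
            # Old data has no value for this field, so a default is required.
            if "default" not in spec:
                problems.append(f"added field {name!r} has no default")
        elif old[name]["type"] != spec["type"]:
            problems.append(
                f"field {name!r} changed type "
                f"{old[name]['type']} -> {spec['type']}"
            )
    return problems

v1 = {"id": {"type": "long"}, "email": {"type": "string"}}
v2 = {**v1, "country": {"type": "string", "default": "unknown"}}
v3 = {"id": {"type": "string"}, "email": {"type": "string"},
      "signup_ts": {"type": "long"}}

print(backward_compat_problems(v1, v2))  # safe change
print(backward_compat_problems(v1, v3))  # breaking change
```

Running a check like this in a deployment pipeline is one way to stop a breaking schema change before it reaches downstream consumers.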

Comprehensive Metadata Management and Data Cataloging

Metadata represents the critical element that transforms data repositories from opaque storage into usable analytical assets. DS STREAM implements comprehensive metadata management encompassing technical metadata describing schemas, formats, and locations; business metadata providing context, definitions, and ownership; operational metadata tracking lineage, quality, and usage; and administrative metadata managing access control and compliance requirements.

Our metadata management solutions leverage enterprise data catalogs including Google Cloud Data Catalog, Microsoft Purview (formerly Azure Purview), AWS Glue Data Catalog, and specialized platforms like Alation and Collibra. These provide unified metadata repositories, automated metadata harvesting from diverse sources, business glossary integration linking technical and business terminology, and comprehensive data lineage tracking from source systems through transformations to consumption.

Effective metadata management enables critical capabilities including intuitive data discovery through semantic search across technical and business metadata, impact analysis understanding downstream effects of schema or pipeline changes, compliance reporting demonstrating data handling for regulatory requirements, and usage analytics identifying valuable datasets and optimization opportunities. DS STREAM's metadata management implementations include automated metadata extraction reducing manual overhead, quality scoring highlighting trusted datasets, and recommendation engines suggesting relevant datasets based on usage patterns and similarities.
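Impact analysis over lineage metadata is essentially a graph traversal. The sketch below walks a small lineage graph breadth-first to find every dataset affected by a change. The dataset names and the adjacency-dictionary representation are assumptions for illustration; a real catalog would expose lineage through its own API.

```python
from collections import deque

# Hypothetical lineage: dataset -> its direct downstream consumers.
LINEAGE = {
    "raw.orders": ["cleansed.orders"],
    "cleansed.orders": ["curated.orders_enriched"],
    "curated.orders_enriched": ["gold.daily_revenue", "gold.customer_ltv"],
    "gold.daily_revenue": ["dashboard.sales"],
}

def downstream(lineage, start):
    """Return every dataset reachable from `start`, excluding `start` itself."""
    seen, queue = set(), deque([start])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:  # also guards against cycles
                seen.add(child)
                queue.append(child)
    return seen

# Which assets are affected if the schema of cleansed.orders changes?
impacted = downstream(LINEAGE, "cleansed.orders")
print(sorted(impacted))
```

The same traversal run in the opposite direction, from a report back toward its sources, answers the provenance questions that compliance reporting typically asks.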

Query Optimization and Performance Engineering

Query performance directly impacts analyst productivity, dashboard responsiveness, and infrastructure costs. DS STREAM implements comprehensive query optimization strategies transforming slow, expensive queries into highly performant operations delivering results in seconds rather than minutes or hours while dramatically reducing computational costs.

Our query optimization methodology encompasses workload analysis identifying frequent query patterns and performance bottlenecks, physical design optimization including partitioning, clustering, and indexing strategies aligned with query patterns, materialized view implementation pre-computing expensive aggregations and joins, and query rewriting transforming inefficient SQL into optimized equivalent queries. We implement automated query monitoring identifying slow queries, analyzing execution plans, and recommending optimization opportunities.
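The combination of a materialized aggregate and incremental refresh can be illustrated with a small sketch. The class below keeps a pre-computed total per day and, on each refresh, folds in only the events it has not seen before. It assumes an append-only event log with increasing integer ids and amounts stored in cents; the class name and event layout are hypothetical, and late-arriving or updated records are not handled.

```python
class DailyRevenueView:
    """Toy materialized aggregate refreshed incrementally via a watermark."""

    def __init__(self):
        self.totals = {}    # day -> revenue in cents
        self.watermark = 0  # highest event id already folded in

    def refresh(self, events):
        """Fold in events newer than the watermark; return how many were used."""
        processed = 0
        new_watermark = self.watermark
        for event_id, day, amount_cents in events:
            if event_id <= self.watermark:
                continue  # already reflected in the stored totals
            self.totals[day] = self.totals.get(day, 0) + amount_cents
            new_watermark = max(new_watermark, event_id)
            processed += 1
        self.watermark = new_watermark
        return processed

events = [(1, "2024-01-01", 1000), (2, "2024-01-01", 500)]
view = DailyRevenueView()
first = view.refresh(events)   # initial build reads both events

events.append((3, "2024-01-02", 700))
second = view.refresh(events)  # incremental refresh reads only the new event
print(first, second, view.totals)
```

Queries against `view.totals` avoid rescanning the event log, and each refresh costs time proportional to the new data rather than to the full history.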

Performance engineering extends beyond individual query optimization to holistic system performance, including workload management preventing resource contention, result caching eliminating redundant computation, incremental refresh strategies minimizing data reprocessing, and storage optimization through compression, file formats, and lifecycle management. DS STREAM's optimization engagements typically achieve 10-50x query performance improvements while reducing infrastructure costs by 30-60% through efficient resource utilization and workload optimization.

Industry-Specific Data Lake and Warehouse Solutions

DS STREAM delivers specialized data lake and warehouse solutions across FMCG, retail, e-commerce, healthcare, and telecommunications industries, each with unique requirements, regulatory constraints, and analytical use cases that inform architecture decisions.

FMCG and Retail Analytics

FMCG and retail organizations require data architectures supporting high-volume transactional data, customer behavior analytics, supply chain optimization, and demand forecasting. We implement data warehouses with rapidly updating dimensions supporting real-time inventory views, data lakes integrating point-of-sale, e-commerce, supply chain, and customer data, and analytics platforms enabling merchandising optimization, promotional effectiveness analysis, and customer segmentation.

Healthcare and Life Sciences

Healthcare data architectures must balance comprehensive data integration with stringent security and compliance requirements including HIPAA, GDPR, and data sovereignty regulations. DS STREAM implements secure data lakes for clinical data, genomic data, medical imaging, and research data with comprehensive encryption, access controls, and audit logging. Our healthcare warehouse designs support population health analytics, clinical decision support, and operational efficiency while ensuring patient privacy and regulatory compliance.

Telecommunications

Telecommunications data architectures process massive volumes of network telemetry, customer usage data, and IoT device information requiring extreme scalability and optimized query performance. We implement petabyte-scale data lakes with efficient partitioning supporting network analytics, customer behavior analysis, and predictive maintenance. Our warehouse designs optimize for complex analytical queries across billions of records enabling customer churn prediction, network optimization, and revenue assurance.

The DS STREAM Advantage in Data Lake and Warehouse Solutions

Choosing DS STREAM for data lake and warehouse initiatives provides organizations with comprehensive expertise, proven methodologies, and commitment to delivering business outcomes.

Team Depth: 150+ data engineers specializing in modern data architecture, cloud platforms, and advanced analytics

Proven Track Record: Over 10 years of successfully implementing data lakes and warehouses for enterprise clients globally

Platform Expertise: Deep technical knowledge across Google BigQuery, Azure Synapse, Snowflake, Databricks, AWS, and emerging lakehouse technologies

Technology Agnostic: Recommendations driven by your specific requirements, existing investments, and strategic direction rather than vendor preferences

Strategic Partnerships: Certified partnerships with Google Cloud, Microsoft Azure, and Databricks providing early access to capabilities and architectural guidance

Industry Specialization: Domain expertise in FMCG, retail, e-commerce, healthcare, and telecommunications ensuring solutions address industry-specific requirements

Holistic Approach: Integration of data architecture with pipeline engineering, data governance, analytics, and machine learning for comprehensive solutions

Migration Excellence: Proven methodologies for legacy warehouse migration to cloud platforms with minimal business disruption