Enabling Real-Time Intelligence with Streaming Data Architecture

Real-time and streaming data processing has evolved from a specialized niche capability into essential enterprise infrastructure as organizations demand immediate insights, rapid response to business events, and sub-second data availability for operational systems and customer experiences. DS STREAM delivers comprehensive streaming data solutions enabling organizations to process millions of events per second, detect patterns and anomalies in real time, and activate insights instantaneously across customer engagement, fraud detection, operational monitoring, and predictive analytics use cases. With 150+ data engineering experts and over 10 years of proven expertise, we architect and implement streaming platforms that transform enterprises from reactive, batch-oriented operations to proactive, event-driven organizations.

Modern enterprises generate continuous streams of data from web and mobile applications, IoT devices, network infrastructure, transaction systems, and social media platforms. Extracting value from these data streams requires fundamentally different architectural approaches than traditional batch processing, demanding distributed processing frameworks, fault-tolerant message delivery, stateful computations, and low-latency data paths. DS STREAM's technology-agnostic approach leverages industry-leading streaming platforms including Apache Kafka and cloud-native services such as Google Cloud Pub/Sub, Azure Event Hubs, and Amazon Kinesis, combined with processing frameworks like Apache Flink, Apache Spark Streaming, and Kafka Streams, to deliver streaming solutions that provide millisecond latencies while maintaining exceptional reliability and scalability.

Business Value of Real-Time Data Processing

Real-time data processing fundamentally transforms how organizations operate, compete, and engage with customers. Organizations implementing streaming architectures experience transformative benefits including immediate detection and response to business-critical events, enhanced customer experiences through personalization at interaction time, fraud prevention identifying suspicious patterns before transactions complete, operational efficiency through real-time monitoring and predictive maintenance, and competitive differentiation by acting on opportunities before competitors.

DS STREAM has enabled organizations across industries to achieve remarkable outcomes: retail clients reducing inventory stockouts by 40% through real-time demand sensing, financial services organizations saving millions annually by preventing fraud through sub-second transaction analysis, telecommunications providers improving network reliability by 60% through real-time telemetry analysis and automated remediation, and healthcare organizations improving patient outcomes through real-time clinical decision support. Real-time capabilities are no longer optional competitive advantages but essential infrastructure for digital-first organizations.

Modern Stream Processing Architecture Patterns

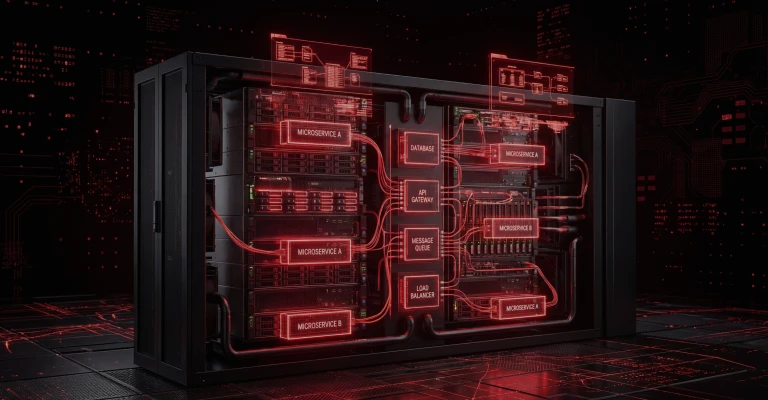

DS STREAM architects streaming platforms based on proven design patterns addressing the unique challenges of continuous data processing including fault tolerance, exactly-once semantics, stateful computations, and late-arriving data. Our streaming architectures incorporate distributed message brokers providing durable, scalable event transport; stream processing engines executing transformations, aggregations, and enrichments; state management enabling sophisticated windowing and sessionization; and low-latency data stores supporting real-time queries and dashboards.

Core architectural components of our streaming solutions include:

Event Ingestion Layer: High-throughput data collection from diverse sources including application events, database change streams, IoT telemetry, and third-party APIs with protocol translation, validation, and routing

Message Broker Infrastructure: Distributed, fault-tolerant event streaming platforms (Kafka, Pulsar, cloud-native services) providing durable storage, replay capabilities, and scalable distribution to multiple consumers

Stream Processing Layer: Distributed computation engines processing events in motion with complex transformations, stateful aggregations, windowing operations, and pattern detection executing with millisecond latencies

State Management: Distributed state stores enabling sophisticated computations including sessionization, user profiling, and complex event processing with fault tolerance and recovery

Sink Layer: Low-latency integration with operational databases, data warehouses, analytics platforms, alerting systems, and downstream applications enabling real-time activation

Monitoring and Observability: Comprehensive real-time monitoring of throughput, latency, error rates, backpressure, and business metrics with automated alerting and visualization

Our architectures address critical streaming challenges including exactly-once processing semantics preventing duplicate processing, fault tolerance with automatic recovery from failures, backpressure management preventing system overload, and late data handling maintaining correctness despite out-of-order arrivals. We implement lambda architectures combining batch and streaming for specific requirements, kappa architectures using streaming for all processing, or hybrid approaches optimizing for specific characteristics.
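
To make the late-data handling above more concrete, the following is a minimal sketch of an event-time tumbling window with a watermark. It is an illustration only, not DS STREAM's implementation and not the API of any particular engine: the class name `TumblingWindowCounter` and the parameters `window_ms` and `allowed_lateness_ms` are hypothetical, and the watermark is assumed to trail the highest event timestamp seen by a fixed lateness bound.

```python
from collections import defaultdict


class TumblingWindowCounter:
    """Counts events per key in fixed event-time windows (illustrative sketch)."""

    def __init__(self, window_ms, allowed_lateness_ms):
        self.window_ms = window_ms
        self.allowed_lateness_ms = allowed_lateness_ms
        self.max_event_time = 0                # highest event timestamp seen so far
        self.open_windows = defaultdict(int)   # (window_start, key) -> count
        self.dropped_late = 0                  # events that arrived after their window closed

    @property
    def watermark(self):
        # Assumption: no event older than this timestamp is still expected.
        return self.max_event_time - self.allowed_lateness_ms

    def process(self, event_time_ms, key):
        """Ingest one event and return the windows it finalizes as (start, key, count)."""
        window_start = event_time_ms - (event_time_ms % self.window_ms)
        if window_start + self.window_ms <= self.watermark:
            # The window was already emitted, so the event is too late to count.
            self.dropped_late += 1
            return []
        self.open_windows[(window_start, key)] += 1
        self.max_event_time = max(self.max_event_time, event_time_ms)
        return self._emit_closed()

    def _emit_closed(self):
        closed = sorted(
            k for k in self.open_windows if k[0] + self.window_ms <= self.watermark
        )
        return [(start, key, self.open_windows.pop((start, key))) for start, key in closed]
```

In this sketch an out-of-order event is still counted as long as the watermark has not passed the end of its window; anything later is counted in `dropped_late`, where a real pipeline might instead route it to a side output for correction.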

Apache Kafka: Enterprise Event Streaming Platform

Apache Kafka has emerged as the de facto standard for enterprise event streaming, providing high-throughput, fault-tolerant, and scalable message broker capabilities that form the backbone of modern streaming architectures. DS STREAM possesses deep Kafka expertise spanning architecture design, cluster operations, performance tuning, security hardening, and ecosystem integration accumulated through hundreds of production implementations across diverse industries and scales.

Our Kafka implementations incorporate best practices for topic design and partitioning strategies balancing parallelism with ordering guarantees, replication factor configuration ensuring durability and availability, retention policies optimizing storage costs while maintaining replay requirements, and consumer group management enabling scalable, fault-tolerant consumption. We implement Kafka Connect for integration with databases, cloud storage, and SaaS applications; Kafka Streams for lightweight stream processing; and Schema Registry for schema management and evolution ensuring producer-consumer compatibility.
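
The trade-off between parallelism and ordering can be illustrated with a small key-based partitioning sketch. This is not Kafka client code: the function names `partition_for` and `route` are hypothetical, and CRC32 is used as a stand-in hash (Kafka's default partitioner for keyed records uses a murmur2 hash). The point is only that records sharing a key land in one partition, so their relative order is preserved, while different keys spread across partitions.

```python
import zlib


def partition_for(key: str, num_partitions: int) -> int:
    """Map a record key to a partition; the same key always maps to the same partition."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def route(events, num_partitions):
    """Append each (key, payload) event to the partition chosen by its key."""
    partitions = [[] for _ in range(num_partitions)]
    for key, payload in events:
        partitions[partition_for(key, num_partitions)].append((key, payload))
    return partitions


events = [
    ("order-1", "created"),
    ("order-2", "created"),
    ("order-1", "paid"),
    ("order-1", "shipped"),
]
partitions = route(events, num_partitions=6)
```

Choosing the order ID as the key keeps each order's lifecycle events in sequence within one partition. Changing the partition count later changes the key-to-partition mapping, which is one reason partition counts are usually planned up front.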

DS STREAM implements comprehensive Kafka operations including cluster sizing and capacity planning based on throughput and retention requirements, monitoring and alerting using tools like Confluent Control Center, Prometheus, and custom dashboards, performance optimization addressing producer/consumer configuration and broker tuning, security implementation including authentication, authorization, and encryption, and disaster recovery with multi-datacenter replication and failover procedures. We deliver both self-managed Kafka deployments on cloud infrastructure and managed service implementations using Confluent Cloud, Amazon MSK, or Azure Event Hubs for Kafka.
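
As a rough illustration of capacity planning from throughput and retention, the sketch below estimates broker storage. The function name, the example workload figures, and the 30% headroom are assumptions chosen for illustration; the estimate ignores compression, index files, and uneven partition distribution.

```python
def kafka_storage_estimate_gb(msgs_per_sec, avg_msg_bytes, retention_hours,
                              replication_factor, headroom=0.3):
    """Estimate total cluster storage in GB (1 GB = 1e9 bytes) for one workload."""
    bytes_per_sec = msgs_per_sec * avg_msg_bytes
    retained_bytes = bytes_per_sec * retention_hours * 3600
    # Every replica stores a full copy; headroom leaves room for growth and rebalancing.
    total_bytes = retained_bytes * replication_factor * (1 + headroom)
    return total_bytes / 1e9


# Hypothetical workload: 50,000 msg/s at 1 KB each, 72 h retention, 3 replicas.
estimate_gb = kafka_storage_estimate_gb(50_000, 1_000, 72, 3)
```

For the hypothetical workload above, 50 MB/s over 72 hours is about 12,960 GB of retained data, 38,880 GB after threefold replication, and roughly 50,544 GB with 30% headroom.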

Real-Time Analytics and Continuous Intelligence

Real-time analytics enables organizations to make decisions based on current conditions rather than historical patterns, fundamentally changing business responsiveness. DS STREAM implements continuous analytics solutions providing streaming aggregations, anomaly detection, pattern recognition, and predictive insights with sub-second latency enabling immediate action on emerging opportunities or threats.

Streaming analytics use cases we implement include real-time dashboard and KPI monitoring showing current business metrics updated continuously, operational intelligence providing live visibility into system health and performance, customer behavior analytics tracking user journeys and enabling real-time personalization, fraud detection analyzing transactions and identifying suspicious patterns instantly, and predictive maintenance processing sensor telemetry to predict equipment failures before they occur. These capabilities move organizations from a reactive posture to a proactive stance, addressing issues before customers are affected and capitalizing on fleeting opportunities.

DS STREAM leverages diverse real-time analytics technologies including stream SQL enabling familiar query syntax on continuous data streams, time-series databases optimized for timestamp-ordered metrics, in-memory data grids providing microsecond query latencies, and real-time OLAP systems combining batch and streaming for comprehensive analytics. We implement visualization layers with auto-refreshing dashboards, alerting systems with intelligent thresholds and anomaly detection, and activation mechanisms triggering automated responses or human workflows based on analytical insights.
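
One simple form of streaming anomaly detection is a rolling z-score over recent values. The sketch below is a minimal illustration under assumed parameters (`window_size`, `threshold`, `min_samples` are hypothetical names and defaults), not a description of a production detector, which would typically also account for seasonality and trend.

```python
from collections import deque
from statistics import mean, pstdev


class RollingZScoreDetector:
    """Flags values that deviate strongly from a sliding window of recent values."""

    def __init__(self, window_size=30, threshold=3.0, min_samples=5):
        self.values = deque(maxlen=window_size)  # oldest values fall out automatically
        self.threshold = threshold
        self.min_samples = min_samples

    def observe(self, value):
        """Return True if value is anomalous relative to the current window."""
        is_anomaly = False
        if len(self.values) >= self.min_samples:
            mu = mean(self.values)
            sigma = pstdev(self.values)
            # A zero spread gives no scale to compare against, so nothing is flagged.
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        self.values.append(value)
        return is_anomaly


detector = RollingZScoreDetector(window_size=10, threshold=3.0, min_samples=5)
flags = [detector.observe(v) for v in [100, 102, 98, 101, 99, 100, 103, 97, 500]]
```

Only the final reading of 500 is flagged in this run. Note that the sketch adds flagged values to the window as well, so a sustained shift eventually becomes the new baseline; whether that is desirable depends on the use case.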

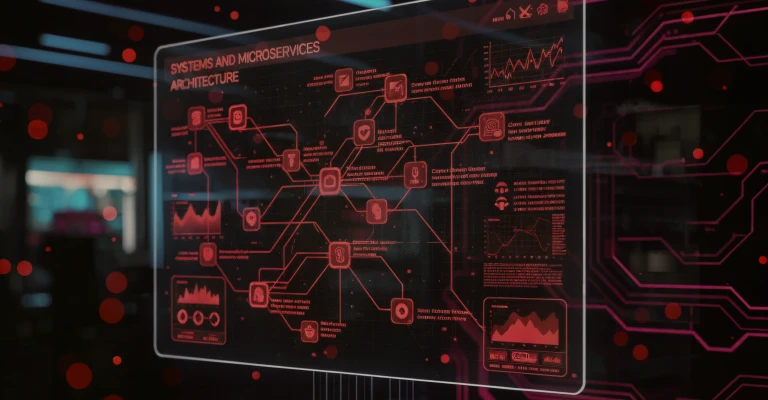

Event-Driven Systems and Microservices Architecture

Event-driven architecture represents a fundamental paradigm shift from request-response systems to loosely coupled, asynchronous communication patterns. DS STREAM designs and implements event-driven systems enabling microservices to communicate through events rather than direct calls, dramatically improving scalability, resilience, and organizational agility by decoupling service dependencies and enabling independent evolution.

Event-driven architecture benefits include improved scalability with asynchronous processing removing bottlenecks, enhanced resilience through loose coupling where failures don't cascade, better auditability with complete event logs providing comprehensive audit trails, simplified integration enabling new consumers without producer changes, and temporal decoupling allowing consumers to process events at their own pace. DS STREAM implements event-driven patterns including event notification for loose integration, event-carried state transfer reducing query overhead, event sourcing as the system of record, and CQRS separating read and write models.

Our event-driven implementations address critical challenges including event schema design balancing completeness with payload size, event versioning enabling schema evolution without breaking consumers, event ordering ensuring causal consistency when required, and idempotency handling duplicate event delivery. We implement comprehensive event governance including event catalogs documenting available events and schemas, ownership and SLA definitions, and deprecation processes ensuring smooth evolution. Event-driven architecture requires cultural and organizational changes beyond technical implementation; DS STREAM provides comprehensive guidance on organizational patterns, team structures, and operational practices necessary for event-driven success.
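
Idempotent handling of duplicate deliveries can be sketched as a consumer that remembers which event IDs it has already applied. The event fields (`event_id`, `account`, `amount`) and the class name are hypothetical. The in-memory set is a simplifying assumption: a real consumer would need to persist processed IDs together with the state change and bound how long they are kept.

```python
class IdempotentConsumer:
    """Applies each event at most once, keyed by its unique event ID."""

    def __init__(self):
        self.processed_ids = set()   # IDs of events already applied
        self.balances = {}           # account -> running balance

    def handle(self, event):
        """Apply the event once; return False if it was a duplicate delivery."""
        event_id = event["event_id"]
        if event_id in self.processed_ids:
            return False
        account = event["account"]
        self.balances[account] = self.balances.get(account, 0) + event["amount"]
        self.processed_ids.add(event_id)
        return True


consumer = IdempotentConsumer()
deposit = {"event_id": "e1", "account": "acct-1", "amount": 100}
results = [
    consumer.handle(deposit),
    consumer.handle(deposit),  # redelivery of the same event
    consumer.handle({"event_id": "e2", "account": "acct-1", "amount": 50}),
]
```

With at-least-once delivery the broker may redeliver `e1` after a retry or rebalance; the ID check ensures the balance reflects it only once.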

Ultra-Low-Latency Data Pipeline Engineering

Latency-sensitive applications demand specialized pipeline engineering optimizing every component for minimal delay. DS STREAM designs and implements ultra-low-latency pipelines achieving end-to-end latencies measured in milliseconds for use cases including algorithmic trading, real-time fraud detection, interactive gaming, and autonomous systems where delays of hundreds of milliseconds represent unacceptable performance.

Low-latency optimization techniques we implement include minimizing serialization overhead using efficient binary formats like Avro or Protocol Buffers, reducing network hops through co-location and edge processing, leveraging in-memory processing eliminating disk I/O latency, implementing streaming joins and aggregations avoiding database roundtrips, and utilizing specialized hardware including NVMe storage and RDMA networking. We conduct comprehensive latency profiling identifying bottlenecks across producer, broker, network, processor, and consumer, systematically optimizing each component.

DS STREAM implements latency SLAs with comprehensive monitoring tracking p50, p95, and p99 latencies, automated alerting for latency threshold violations, and performance testing validating latency characteristics under various load conditions. We balance latency optimization with other requirements including throughput, fault tolerance, and cost, ensuring solutions meet latency requirements without unnecessary complexity or expense. Typical low-latency implementations achieve end-to-end latencies under 100 milliseconds at the 95th percentile while processing millions of events per second.
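
The percentile tracking described above can be illustrated with a small nearest-rank calculation over collected latency samples. The function names and the `p95_target_ms` parameter are hypothetical, and the sketch assumes all samples fit in memory; monitoring systems usually rely on histograms or sketches to approximate percentiles at scale.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_report(samples_ms, p95_target_ms=100):
    """Summarize p50/p95/p99 and check the p95 value against a target."""
    report = {f"p{p}": percentile(samples_ms, p) for p in (50, 95, 99)}
    report["target_met"] = report["p95"] < p95_target_ms
    return report


# Hypothetical samples: end-to-end latencies of 1..100 ms, one of each.
report = latency_report(list(range(1, 101)))
```

Tracking p95 and p99 alongside the median matters because a healthy median can hide a slow tail that affects a meaningful share of requests.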

IoT Data Processing and Edge Computing Integration

Internet of Things deployments generate unprecedented data volumes from sensors, devices, and embedded systems requiring specialized streaming architectures addressing massive scale, intermittent connectivity, limited device capabilities, and edge processing requirements. DS STREAM designs and implements IoT data platforms processing telemetry from millions of devices, providing real-time analytics, and enabling closed-loop control systems for industrial, smart city, connected vehicle, and consumer IoT applications.

IoT streaming challenges we address include massive scale with millions or billions of devices generating continuous telemetry, heterogeneous devices with varying capabilities and protocols, intermittent connectivity requiring store-and-forward capabilities, data volume management through intelligent sampling and edge aggregation, and security ensuring device authentication and encrypted communication. DS STREAM implements comprehensive IoT ingestion supporting protocols including MQTT, CoAP, HTTP, and proprietary protocols, with protocol translation, device management, and firmware update capabilities.

Edge computing represents a critical architecture pattern for IoT deployments, processing data near generation points to reduce latency, bandwidth consumption, and cloud costs. DS STREAM implements edge processing architectures deploying lightweight stream processing at edge nodes performing filtering, aggregation, and anomaly detection locally, transmitting only meaningful data or detected patterns to cloud platforms for comprehensive analytics and storage. Our edge-to-cloud architectures enable sophisticated use cases including predictive maintenance, autonomous vehicle systems, and industrial control requiring millisecond response times combined with comprehensive cloud analytics for optimization and monitoring.
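
The idea of aggregating at the edge and sending only meaningful data upstream can be sketched as follows. The function name, message shapes, and the fixed threshold are assumptions for illustration; a real edge node would also handle buffering during connectivity loss.

```python
from statistics import mean


def summarize_at_edge(readings, window_size, alert_above):
    """Collapse raw readings into per-window summaries plus individual alerts."""
    uplink = []  # messages that would be sent to the cloud
    for i in range(0, len(readings), window_size):
        window = readings[i:i + window_size]
        uplink.append({
            "type": "summary",
            "count": len(window),
            "min": min(window),
            "max": max(window),
            "mean": mean(window),
        })
        # Raw values are forwarded only when they cross the alert threshold.
        for value in window:
            if value > alert_above:
                uplink.append({"type": "alert", "value": value})
    return uplink


# Hypothetical temperature readings from one sensor.
readings = [20, 21, 19, 20, 22, 95, 21, 20]
uplink = summarize_at_edge(readings, window_size=4, alert_above=80)
```

Here eight raw readings become three uplink messages: two summaries and one alert for the reading of 95.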

Stream Processing Frameworks and Technology Selection

DS STREAM maintains deep expertise across diverse stream processing frameworks, each with specific strengths, characteristics, and optimal use cases. Framework selection significantly impacts performance, operational complexity, and development productivity, requiring careful evaluation aligned with requirements, team capabilities, and ecosystem considerations.

Apache Flink

Apache Flink provides stream processing with true event-time processing, exactly-once semantics, flexible windowing, and high throughput. Flink excels for complex event processing, stateful computations, and applications requiring strict correctness guarantees. DS STREAM implements Flink for advanced streaming analytics, pattern detection, and mission-critical applications where exactly-once processing is essential.

Apache Spark Streaming

Apache Spark Structured Streaming provides unified batch and streaming processing with familiar DataFrame/Dataset APIs enabling code reuse across batch and streaming contexts. Spark excels for organizations with existing Spark investments, complex transformations leveraging Spark's rich transformation library, and lambda architectures requiring batch-streaming consistency. DS STREAM implements Spark Structured Streaming for organizations prioritizing developer productivity and unified processing paradigms.

Kafka Streams

Kafka Streams provides lightweight stream processing as a Java library without separate cluster infrastructure, simplifying operations and deployment. Kafka Streams excels for Kafka-centric architectures, microservices-based processing, and applications requiring operational simplicity. DS STREAM implements Kafka Streams for event-driven microservices, lightweight transformations, and organizations prioritizing operational simplicity.

Industry-Specific Real-Time and Streaming Solutions

DS STREAM delivers specialized streaming solutions across FMCG, retail, e-commerce, healthcare, and telecommunications industries, each with unique real-time requirements and use cases.

Retail and E-Commerce

Retail streaming platforms process clickstream events, inventory updates, transaction streams, and customer interactions enabling real-time personalization, dynamic pricing, fraud detection, and inventory optimization. We implement sub-second recommendation engines, real-time inventory visibility across channels, and fraud detection analyzing transactions in flight before completion. Our retail streaming solutions process millions of events per second during peak shopping periods while maintaining consistent low latency.

Healthcare and Life Sciences

Healthcare streaming applications process patient monitoring data, medical device telemetry, and clinical system events enabling real-time clinical decision support, patient deterioration detection, and operational efficiency. DS STREAM implements HIPAA-compliant streaming platforms with comprehensive encryption, audit logging, and access controls supporting use cases including ICU patient monitoring, early warning systems, and real-time capacity management while ensuring patient privacy and regulatory compliance.

Telecommunications

Telecommunications streaming platforms process network telemetry, call detail records, and customer usage events at massive scale enabling real-time network optimization, fraud detection, and customer analytics. We implement platforms processing billions of events daily with sophisticated anomaly detection, pattern recognition, and automated remediation supporting network reliability, revenue assurance, and customer experience optimization.

The DS STREAM Advantage in Real-Time and Streaming Solutions

Specialized Expertise: 150+ data engineers with deep streaming architecture and processing framework expertise

Proven Experience: Over 10 years delivering mission-critical real-time and streaming solutions across industries

Technology Breadth: Comprehensive expertise across Apache Kafka, Flink, Spark Streaming, Kafka Streams, and cloud-native streaming services

Platform Agnostic: Technology recommendations driven by your requirements rather than vendor relationships

Cloud Expertise: Deep knowledge of Google Cloud, Microsoft Azure, and AWS streaming services and best practices

Industry Specialization: Domain expertise in FMCG, retail, e-commerce, healthcare, and telecommunications streaming use cases

End-to-End Solutions: Comprehensive services from architecture through implementation, optimization, and managed operations

Performance Focus: Proven methodologies achieving millisecond latencies while processing millions of events per second