Transforming Data Integration with Modern ETL/ELT Engineering

ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform) processes form the critical backbone of enterprise data ecosystems, moving and transforming data from diverse sources into actionable analytics and operational systems. DS STREAM delivers comprehensive ETL/ELT development services that enable organizations to integrate disparate data sources, implement complex business logic transformations, ensure data quality, and deliver reliable, performant data flows supporting mission-critical business operations. With 150+ specialized data engineers and over 10 years of proven expertise, we architect and implement data integration solutions that transform fragmented data landscapes into unified, accessible, and valuable data assets.

Modern enterprises operate with hundreds or thousands of data sources including transactional databases, SaaS applications, IoT devices, social media feeds, and third-party data providers. Integrating these diverse sources while maintaining data quality, managing schema changes, and ensuring reliable delivery requires sophisticated engineering practices and deep technical expertise. DS STREAM's technology-agnostic approach leverages best-in-class integration tools and frameworks across cloud platforms including Google Cloud, Microsoft Azure, and AWS, combined with modern orchestration capabilities using Apache Airflow, to deliver ETL/ELT solutions that scale from megabytes to petabytes while maintaining exceptional reliability and performance.

ETL vs ELT: Choosing the Right Integration Pattern

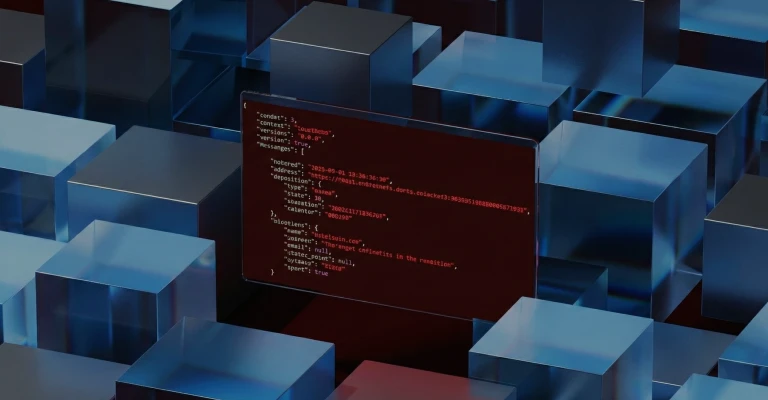

Understanding when to implement ETL versus ELT architectures represents a critical design decision with significant implications for performance, scalability, and operational complexity. Traditional ETL extracts data from sources, performs transformations in intermediate processing layers, and loads transformed data into target systems. This pattern works well when target systems have limited processing capabilities, transformations are computationally intensive, or data must be cleansed before reaching target environments.

ELT inverts this paradigm by extracting data from sources, loading raw data into target systems (typically cloud data warehouses or lakes), and performing transformations using the target system's processing capabilities. ELT leverages the massive parallel processing power of modern cloud data platforms, reduces data movement overhead, and enables more flexible transformation logic that can evolve without reprocessing source extractions.

DS STREAM evaluates ETL versus ELT approaches based on specific requirements including source system capabilities and constraints, target platform processing power and cost structure, data volume and velocity characteristics, transformation complexity and computational requirements, data governance and security policies, and organizational skills and tooling preferences. Many modern implementations employ hybrid approaches: ELT for high-volume data with complex transformations, ETL for sensitive data requiring transformation before landing, and real-time streaming for latency-sensitive use cases.
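The ELT flow described above can be sketched in a few lines. This is an illustration only, assuming an in-memory SQLite database as a stand-in for a cloud warehouse; the table names, sample rows, and the `run_elt` function are hypothetical and not taken from any DS STREAM implementation.

```python
import sqlite3

# Hypothetical raw order rows, as they might arrive from a source system.
RAW_ORDERS = [
    ("1001", " alice@example.com ", "19.50"),
    ("1002", "BOB@EXAMPLE.COM", "5.25"),
    ("1003", "carol@example.com", "not-a-number"),
]


def run_elt(conn: sqlite3.Connection) -> list[tuple]:
    """Load raw rows untouched, then transform them with SQL inside the target."""
    conn.execute("CREATE TABLE raw_orders (order_id TEXT, email TEXT, amount TEXT)")
    # Load step: raw data lands as-is, with no cleansing.
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", RAW_ORDERS)
    # Transform step: runs in the target engine and can be rewritten later
    # without extracting from the source again.
    conn.execute(
        """
        CREATE TABLE orders AS
        SELECT CAST(order_id AS INTEGER) AS order_id,
               LOWER(TRIM(email))        AS email,
               CAST(amount AS REAL)      AS amount
        FROM raw_orders
        WHERE amount GLOB '[0-9]*.[0-9]*'
        """
    )
    return conn.execute(
        "SELECT order_id, email, amount FROM orders ORDER BY order_id"
    ).fetchall()
```

Because the raw table is preserved, the malformed third row stays available for later inspection. In an ETL design the same cleansing would instead run before anything reached the target.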

Comprehensive Data Extraction Strategies

Effective data extraction represents the foundation of reliable data integration. DS STREAM implements sophisticated extraction strategies tailored to source system capabilities, data characteristics, and business requirements. Our extraction approaches minimize source system impact, ensure data consistency, and provide mechanisms for recovering from failures without data loss or duplication.

Extraction patterns we implement include:

Full Extraction: Complete dataset extraction suitable for small datasets, initial loads, or sources without change tracking. We implement intelligent checkpointing and resumption capabilities for large full extractions.

Incremental Extraction: Delta-based extraction using timestamps, version numbers, or sequence IDs to identify records changed since the last extraction. This dramatically reduces data movement and processing overhead for large datasets.

Change Data Capture (CDC): Real-time or near-real-time extraction leveraging database transaction logs, triggers, or native CDC capabilities. Provides minimal latency and source impact while capturing all data changes including updates and deletes.

API-Based Extraction: Integration with REST, GraphQL, or SOAP APIs including pagination handling, rate limiting management, authentication, and retry logic with exponential backoff.

File-Based Extraction: Processing structured files (CSV, JSON, XML, Parquet) from SFTP, S3, Azure Blob, or other file systems with comprehensive file pattern matching, archival, and error handling.

Database Replication: Logical or physical replication for relational databases providing low-latency data synchronization with minimal impact on source system performance.
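As a rough sketch of the API-based pattern in the list above, the following shows cursor pagination combined with retries and exponential backoff. The `TransientError` class, the `fetch_page` callable, and the `items` / `next_cursor` response fields are assumptions made for illustration; real APIs differ in how they signal retryable errors and paging.

```python
import random
import time
from typing import Callable, Iterator, Optional


class TransientError(Exception):
    """Hypothetical marker for retryable failures (timeouts, HTTP 429/503)."""


def with_backoff(call: Callable[[], dict], max_attempts: int = 5,
                 base_delay: float = 0.5,
                 sleep: Callable[[float], None] = time.sleep) -> dict:
    """Retry `call` on TransientError, doubling the delay after each failure."""
    for attempt in range(max_attempts):
        try:
            return call()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # Exponential delay plus jitter so parallel workers do not retry in lockstep.
            delay = base_delay * (2 ** attempt)
            sleep(delay + random.uniform(0, delay / 2))
    raise ValueError("max_attempts must be at least 1")


def extract_all(fetch_page: Callable[[Optional[str]], dict],
                **retry_kwargs) -> Iterator[dict]:
    """Follow cursor-based pagination until the API stops returning a cursor."""
    cursor: Optional[str] = None
    while True:
        page = with_backoff(lambda: fetch_page(cursor), **retry_kwargs)
        yield from page["items"]
        cursor = page.get("next_cursor")
        if cursor is None:
            return
```

Passing `sleep` as a parameter keeps the retry logic testable without real waiting; a production version would likely also honor any rate-limit headers the API returns.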

DS STREAM implements comprehensive extraction monitoring including data quality checks at extraction, volume anomaly detection identifying unexpected changes, extraction performance tracking, and automated alerting for failures or issues. We design extraction processes as idempotent operations that can safely re-execute without causing data duplication or corruption, critical for reliable production operations.
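Volume anomaly detection can take many forms. One simple possibility, shown here purely as an assumption about how such a check might look, compares the current row count against recent history using a z-score. The function name and the default threshold of 3.0 are illustrative choices.

```python
from statistics import mean, stdev


def is_volume_anomaly(history: list[int], current: int,
                      threshold: float = 3.0) -> bool:
    """Flag `current` if it lies more than `threshold` standard deviations
    from the mean of the recent extraction row counts in `history`."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return current != mu  # perfectly stable history: any change is unusual
    return abs(current - mu) / sigma > threshold
```

A check like this would typically run right after extraction and feed the alerting path rather than block the pipeline outright.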

Advanced Data Transformation Engineering

Data transformation embodies the business logic that converts raw source data into meaningful, analytics-ready information. DS STREAM implements sophisticated transformation logic supporting complex business rules, data enrichment, quality validation, and format standardization while ensuring maintainability, testability, and performance at scale.

Common transformation patterns we implement include data cleansing (removing duplicates, correcting errors, and standardizing formats); data enrichment (augmenting records with reference data, lookups, and external data sources); data aggregation (computing summaries, metrics, and KPIs at various granularities); data normalization (decomposing data into relational structures that eliminate redundancy); data denormalization (creating flat, query-optimized structures for analytics); data masking and anonymization (protecting sensitive information while preserving analytical utility); and complex business calculations (implementing domain-specific logic and derived metrics).
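Two of these patterns, cleansing and masking, are small enough to sketch directly. The example below is a minimal illustration that assumes records are dictionaries with an `email` field; the function names and the choice of a keyed SHA-256 hash for masking are assumptions, not a description of a specific DS STREAM implementation.

```python
import hashlib
import hmac


def cleanse(records: list[dict]) -> list[dict]:
    """Standardize email formatting and drop duplicates, keeping the first occurrence."""
    seen: set[str] = set()
    result = []
    for record in records:
        email = record["email"].strip().lower()
        if email in seen:
            continue
        seen.add(email)
        result.append({**record, "email": email})
    return result


def mask_email(email: str, secret: bytes) -> str:
    """Replace an email with a keyed hash: stable for joins, and not
    recomputable by anyone who lacks the key."""
    return hmac.new(secret, email.encode("utf-8"), hashlib.sha256).hexdigest()
```

Because the masked value is deterministic for a given key, masked datasets can still be joined on it, which is one way to preserve analytical utility.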

DS STREAM leverages diverse transformation frameworks including SQL-based transformations using dbt, Dataform, or native warehouse capabilities providing version control and testing; Spark-based processing for complex, computationally intensive transformations at scale; Python and Pandas for flexibility and integration with machine learning; cloud-native services including Google Cloud Dataflow, Azure Data Factory, and AWS Glue; and custom processing for specialized requirements. Framework selection is driven by transformation complexity, data volume, performance requirements, and team capabilities.

Transformation code quality and maintainability represent critical success factors for long-term operational sustainability. We implement transformation code as modular, reusable components with comprehensive unit testing; version control enabling audit trails and rollback capabilities; thorough documentation explaining business logic and data lineage; and code review processes ensuring quality and knowledge sharing across teams. Transformation logic is designed for incremental processing: it handles only changed data rather than reprocessing full datasets, which dramatically improves performance and reduces computational costs.

Optimized Data Loading and Target Management

Data loading represents the final phase of ETL/ELT workflows, delivering transformed data to target systems including data warehouses, data lakes, operational databases, analytics platforms, and downstream applications. DS STREAM implements loading strategies optimized for target system capabilities, data freshness requirements, and operational constraints while ensuring data consistency, minimizing downtime, and maintaining performance.

Loading patterns we implement include:

Full Refresh: Completely replaces target data; suitable for small datasets or when historical tracking is unnecessary.

Incremental Append: Adds new records without modifying existing data, supporting event streams and fact tables.

Incremental Upsert (Merge): Inserts new records and updates changed records, maintaining current-state views.

Slowly Changing Dimensions (SCD): Implements Type 1 (overwrite), Type 2 (historical tracking with versioning), or Type 3 (limited history) patterns.

Partition Management: Updates specific partitions rather than entire tables, dramatically improving performance for large datasets.
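To make the Type 2 slowly changing dimension pattern concrete, here is a minimal in-memory sketch. It assumes each dimension row is a dictionary carrying `valid_from`, `valid_to`, and `is_current` fields; those column names and the `apply_scd2` function are illustrative conventions, and a warehouse implementation would normally express the same logic as a merge statement.

```python
from datetime import date
from typing import Optional


def apply_scd2(dimension: list[dict], incoming: dict, key: str,
               tracked: list[str], as_of: date) -> list[dict]:
    """Return a new dimension table with `incoming` applied as a Type 2 change.

    Each row carries `valid_from`, `valid_to` (None while current) and `is_current`.
    """
    current: Optional[dict] = next(
        (row for row in dimension
         if row[key] == incoming[key] and row["is_current"]),
        None,
    )
    if current is not None and all(current[c] == incoming[c] for c in tracked):
        return list(dimension)  # nothing changed: re-running is a no-op

    result = []
    for row in dimension:
        if row is current:
            # Close the old version instead of overwriting it.
            row = {**row, "valid_to": as_of, "is_current": False}
        result.append(row)
    result.append(
        {**incoming, "valid_from": as_of, "valid_to": None, "is_current": True}
    )
    return result
```

A Type 1 load would simply overwrite the tracked columns on the current row, which is why it keeps no history.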

DS STREAM optimizes loading operations through bulk loading APIs leveraging database-specific capabilities for maximum throughput, parallel loading distributing data across multiple threads or processes, intelligent batching balancing throughput with transaction overhead, and compression reducing network transfer and storage costs. We implement comprehensive error handling with transaction management ensuring atomic commits or rollbacks, dead letter queues capturing problematic records without stopping pipelines, and automated retry mechanisms with exponential backoff for transient failures.
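The interplay of batching, atomic commits, and a dead letter queue might look roughly like the sketch below. It assumes SQLite as a stand-in target and a hypothetical `sales` table; the in-memory `dead_letter` list stands in for a real queue or quarantine table.

```python
import sqlite3
from itertools import islice
from typing import Iterable, Iterator


def batched(rows: Iterable[tuple], size: int) -> Iterator[list[tuple]]:
    """Yield successive lists of at most `size` rows."""
    iterator = iter(rows)
    while batch := list(islice(iterator, size)):
        yield batch


def load_in_batches(conn: sqlite3.Connection, rows: Iterable[tuple],
                    batch_size: int = 500) -> tuple[int, list[list[tuple]]]:
    """Insert rows batch by batch; each batch commits or rolls back as a unit.

    Returns the number of rows loaded and the rejected batches
    (a stand-in for a dead letter queue).
    """
    loaded, dead_letter = 0, []
    for batch in batched(rows, batch_size):
        try:
            with conn:  # commits on success, rolls back if an exception escapes
                conn.executemany(
                    "INSERT INTO sales (sale_id, amount) VALUES (?, ?)", batch
                )
            loaded += len(batch)
        except sqlite3.IntegrityError:
            dead_letter.append(batch)  # keep the pipeline running
    return loaded, dead_letter
```

Larger batches reduce per-transaction overhead but mean more rows are rejected together when a single record fails, which is the trade-off that batch sizing has to balance.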

Batch and Incremental Processing Architectures

Batch and incremental processing represent fundamental architectural patterns for data integration, each with specific use cases, advantages, and implementation considerations. DS STREAM designs processing architectures aligned with data freshness requirements, data volume characteristics, source system capabilities, and operational constraints.

Batch Processing

Batch processing executes ETL/ELT workflows on scheduled intervals (hourly, daily, or weekly), processing accumulated data changes in single runs. Batch processing provides operational simplicity, efficient resource utilization through scheduled processing, and natural checkpoints for quality validation and recovery. DS STREAM implements batch processing for use cases including nightly financial reporting, daily sales analytics, weekly supply chain updates, and monthly performance aggregations where data freshness requirements align with batch schedules.

Our batch implementations include intelligent dependency management ensuring prerequisite jobs complete before dependent workflows, resource optimization scheduling computationally intensive jobs during off-peak hours, comprehensive monitoring tracking execution duration and identifying performance degradation, and automated recovery mechanisms restarting failed batches from checkpoints. We implement batch size optimization balancing processing efficiency with memory constraints and parallelization strategies leveraging distributed processing frameworks for large-volume batch operations.
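Dependency management of this kind is usually delegated to an orchestrator such as Apache Airflow. The underlying idea can be illustrated with Python's standard-library `graphlib`, as in the sketch below; the job names are hypothetical, and this is not Airflow's API.

```python
from graphlib import TopologicalSorter

# Hypothetical nightly jobs: each key lists the jobs that must finish first.
DEPENDENCIES = {
    "extract_orders": set(),
    "extract_customers": set(),
    "build_sales_fact": {"extract_orders", "extract_customers"},
    "daily_sales_report": {"build_sales_fact"},
}


def execution_waves(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group jobs into waves; all jobs within a wave can run in parallel."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # jobs whose prerequisites are done
        waves.append(ready)
        sorter.done(*ready)
    return waves
```

With the sample graph, both extract jobs form the first wave, followed by the fact build and then the report. A failed job would simply never be marked done, so its dependents would not be released.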

Incremental Processing

Incremental processing identifies and processes only the data changed since the last execution, dramatically reducing processing overhead and enabling higher-frequency updates. DS STREAM implements incremental processing using watermarks tracking maximum processed timestamps or IDs, change data capture identifying database changes, file modification timestamps for file-based sources, and API pagination and filtering for SaaS integrations. Incremental processing enables near-real-time data availability while maintaining batch processing simplicity and operational characteristics.

Incremental processing challenges include managing late-arriving data that lands after subsequent batches have already run, handling deletes and updates that require lookback windows or CDC mechanisms, and maintaining state across executions, which requires persistent watermark storage. DS STREAM implements sophisticated state management, late data handling strategies, and reconciliation processes ensuring data consistency despite incremental complexity. Our incremental implementations typically achieve 10-100x performance improvements over full processing while providing data freshness measured in minutes rather than hours or days.
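A watermark with a lookback window, one common way to handle late-arriving data, can be sketched as follows. The example assumes each row carries an `updated_at` timestamp; the `select_increment` function and that field name are illustrative, and persisting the watermark between runs is left out.

```python
from datetime import datetime, timedelta


def select_increment(rows: list[dict], watermark: datetime,
                     lookback: timedelta = timedelta(0)
                     ) -> tuple[list[dict], datetime]:
    """Return rows updated after (watermark - lookback) and the new watermark.

    Re-reading the lookback window picks up late-arriving rows, so the
    downstream load must be an idempotent upsert to avoid duplicates.
    """
    cutoff = watermark - lookback
    changed = [row for row in rows if row["updated_at"] > cutoff]
    # Never move the watermark backwards, even if only late rows were found.
    new_watermark = max([watermark] + [row["updated_at"] for row in changed])
    return changed, new_watermark
```

A longer lookback catches more late data at the cost of re-reading more rows on every run. Deletes are not visible to this approach at all, which is where CDC or periodic reconciliation comes in.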

Enterprise Data Integration Patterns and Best Practices

DS STREAM implements proven data integration patterns addressing common challenges including source system diversity, schema evolution, error handling, and performance optimization. Our pattern library represents accumulated best practices from hundreds of implementations across diverse industries and technical environments.

Key integration patterns include hub-and-spoke architectures centralizing integration logic while providing distributed data access, data virtualization providing unified views across disparate sources without physical integration, federation enabling distributed queries across multiple systems, and data mesh principles treating data as products with domain-oriented ownership. We implement standardized integration interfaces defining contracts between source and target systems, reducing coupling and enabling independent evolution.

Error handling patterns we implement include validation at boundaries catching errors early before propagation, quarantine mechanisms isolating problematic records for investigation without stopping pipelines, automated correction for known error patterns, and escalation workflows routing complex issues to data stewards. Performance optimization patterns include caching frequently accessed reference data, pre-aggregation computing common metrics during loading rather than at query time, and partitioning strategies aligning with query patterns for efficient data pruning.
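Validation at boundaries combined with quarantine can be illustrated with a small sketch. The validator functions, field names, and the shape of the quarantine entries below are all assumptions made for the example.

```python
from typing import Callable, Optional

# A validator returns an error message, or None if the record passes.
Validator = Callable[[dict], Optional[str]]


def require_fields(*fields: str) -> Validator:
    """Build a validator that rejects records with missing or empty fields."""
    def check(record: dict) -> Optional[str]:
        missing = [f for f in fields if record.get(f) in (None, "")]
        return f"missing fields: {', '.join(missing)}" if missing else None
    return check


def positive_amount(record: dict) -> Optional[str]:
    """Reject records whose amount is absent, non-numeric, or not positive."""
    amount = record.get("amount")
    if not isinstance(amount, (int, float)) or amount <= 0:
        return "amount must be a positive number"
    return None


def validate_batch(records: list[dict], validators: list[Validator]
                   ) -> tuple[list[dict], list[dict]]:
    """Split records into valid rows and quarantined rows with their reasons."""
    valid, quarantined = [], []
    for record in records:
        errors = [msg for check in validators
                  if (msg := check(record)) is not None]
        if errors:
            quarantined.append({"record": record, "errors": errors})
        else:
            valid.append(record)
    return valid, quarantined
```

Storing the reasons alongside each quarantined record gives data stewards what they need to triage without rerunning the pipeline.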

ETL/ELT Performance Optimization and Scaling

ETL/ELT performance directly impacts data freshness, infrastructure costs, and scalability to growing data volumes. DS STREAM implements comprehensive performance optimization strategies addressing extraction efficiency, transformation logic optimization, loading throughput, and end-to-end pipeline orchestration. Our optimization engagements typically achieve 5-20x performance improvements while reducing infrastructure costs 30-50%.

Performance optimization techniques include parallel processing distributing workloads across multiple threads, processes, or compute nodes; incremental logic processing only changed data rather than full datasets; partition pruning limiting data scans to relevant subsets; predicate pushdown moving filtering logic to data sources reducing data movement; and columnar storage and compression optimizing I/O and storage efficiency. We implement comprehensive performance monitoring tracking execution duration, resource utilization, and data volumes processed, identifying optimization opportunities through systematic analysis.
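Partition pruning is easiest to see with a toy example. The sketch below assumes data partitioned by day and held in a dictionary; real engines prune using partition metadata, but the effect on rows read is the same in spirit. The function names and the `Partitions` alias are hypothetical.

```python
from datetime import date
from typing import Callable

Partitions = dict[date, list[dict]]


def prune_partitions(partitions: Partitions, start: date, end: date) -> list[date]:
    """Return only the partition keys that can contain rows in [start, end]."""
    return sorted(day for day in partitions if start <= day <= end)


def scan(partitions: Partitions, start: date, end: date,
         predicate: Callable[[dict], bool]) -> tuple[list[dict], int]:
    """Read only the surviving partitions and report how many rows were touched."""
    matches, rows_read = [], 0
    for day in prune_partitions(partitions, start, end):
        for row in partitions[day]:
            rows_read += 1
            if predicate(row):
                matches.append(row)
    return matches, rows_read
```

The `rows_read` counter makes the benefit measurable: partitions outside the date range contribute nothing to it, regardless of how large they are.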

Scaling strategies we implement include horizontal scaling adding compute resources to distribute workloads, vertical scaling increasing resources for specific bottlenecks, autoscaling dynamically adjusting resources based on workload demands, and workload isolation preventing interference between pipelines. DS STREAM designs ETL/ELT solutions that scale seamlessly from gigabytes to petabytes while maintaining consistent performance characteristics and operational simplicity.

Industry-Specific ETL/ELT Solutions

DS STREAM delivers specialized ETL/ELT solutions across FMCG, retail, e-commerce, healthcare, and telecommunications industries, each with unique integration challenges, data sources, and regulatory requirements.

Retail and E-Commerce Integration

Retail and e-commerce environments require integrating point-of-sale systems, e-commerce platforms, inventory management, customer data platforms, marketing automation, and supply chain systems. We implement high-frequency ETL/ELT supporting real-time inventory updates, customer behavior analytics, and dynamic pricing while handling seasonal volume spikes. Our solutions integrate with platforms including Shopify, Magento, SAP, Oracle Retail, and numerous point-of-sale systems.

Healthcare Data Integration

Healthcare ETL/ELT must integrate electronic health records, laboratory systems, medical imaging, billing systems, and claims processing while maintaining HIPAA compliance and data privacy. DS STREAM implements HL7 and FHIR integration standards, de-identification and anonymization transformations, and comprehensive audit logging. Our healthcare integrations support population health analytics, clinical decision support, and operational efficiency while ensuring patient privacy and regulatory compliance.

Telecommunications Data Integration

Telecommunications ETL/ELT processes massive volumes of network telemetry, call detail records, customer usage data, and billing information, requiring extreme performance and scalability. We implement high-throughput batch and streaming integration supporting network analytics, customer behavior analysis, fraud detection, and revenue assurance, processing billions of records daily with sophisticated aggregation and enrichment logic.

The DS STREAM Advantage in ETL/ELT Development

Choosing DS STREAM for ETL/ELT development initiatives provides organizations with comprehensive expertise, proven methodologies, and commitment to operational excellence.

Technical Depth: 150+ data engineers specializing in data integration, transformation logic, and performance optimization

Proven Experience: Over 10 years delivering mission-critical ETL/ELT solutions for enterprise clients across industries

Technology Breadth: Expertise across cloud-native integration services, Apache Spark, Airflow, dbt, custom frameworks, and legacy ETL tools

Technology Agnostic: Recommendations driven by your specific requirements and strategic direction rather than tool preferences

Cloud Expertise: Deep knowledge of Google Cloud, Microsoft Azure, and AWS integration services and best practices

Industry Specialization: Domain expertise in FMCG, retail, e-commerce, healthcare, and telecommunications integration challenges

Quality Focus: Comprehensive testing, validation, and quality assurance ensuring reliable production operations

Performance Excellence: Proven optimization methodologies achieving dramatic performance improvements and cost reduction