Establishing Responsible, Compliant, and Risk-Managed AI Systems for Enterprise Scale

As artificial intelligence and machine learning systems increasingly drive critical business decisions and customer interactions, organizations face mounting pressure from regulators, stakeholders, and society to ensure these systems operate responsibly, transparently, and fairly. The consequences of ungoverned AI systems extend beyond reputational damage to include regulatory penalties, legal liability, financial losses, and erosion of customer trust. High-profile AI failures—discriminatory lending decisions, biased hiring algorithms, privacy violations, and unexplainable model predictions affecting human lives—demonstrate that technical excellence alone is insufficient. Organizations require comprehensive governance frameworks, robust compliance processes, and systematic risk management practices embedded throughout the ML lifecycle.

DS STREAM delivers comprehensive AI governance, compliance, and model risk management solutions that enable organizations to pursue AI innovation with confidence while meeting regulatory requirements and managing inherent risks. Our 150+ specialists bring over 10 years of experience implementing governance frameworks across heavily regulated industries including healthcare, financial services, and telecommunications—sectors where model failures have serious consequences and regulatory oversight is stringent. Our technology-agnostic approach, combined with partnerships with Google Cloud, Microsoft Azure, and Databricks, ensures governance solutions integrate seamlessly with existing infrastructure while implementing industry best practices for model explainability, fairness assessment, regulatory compliance, risk quantification, audit trails, and ethical AI development.

The Imperative for AI Governance and Risk Management

Organizations deploying AI systems operate in an evolving landscape of regulatory requirements, ethical expectations, and risk exposures that demand proactive governance rather than reactive compliance. Regulatory frameworks worldwide increasingly mandate transparency, explainability, fairness testing, and human oversight for AI systems that affect consequential decisions. The European Union's AI Act establishes risk-based requirements, with the most extensive obligations applying to high-risk AI systems. In the United States, sector-specific AI rules are developing alongside existing laws, such as the Equal Credit Opportunity Act and the Fair Housing Act, that apply to algorithmic decisions. Healthcare AI faces FDA oversight when clinical decision support software qualifies as a medical device. Financial institutions face model risk management requirements from banking regulators.

Beyond regulatory compliance, organizations face operational risks from AI systems including model performance degradation causing poor business decisions, discriminatory predictions creating legal liability and reputational damage, unexplainable model outputs undermining stakeholder trust, data privacy violations resulting in regulatory penalties, and security vulnerabilities enabling adversarial attacks. These risks intensify as AI adoption scales, with model failures in critical systems potentially causing significant financial and operational impact.

Effective governance transforms these challenges into competitive advantages. Organizations with robust governance frameworks move faster because systematic risk management enables confident deployment rather than cautious inaction. Clear governance lets organizations delegate AI decision-making to the appropriate teams, confident that adequate controls are in place. Comprehensive documentation and audit trails accelerate regulatory reviews and audits. Fairness and explainability capabilities build stakeholder trust and encourage adoption of AI recommendations. DS STREAM's governance solutions balance innovation velocity with proportionate risk management, enabling responsible AI at scale.

AI Governance Framework Design and Implementation

Comprehensive AI governance requires organizational structures, policies, processes, and technologies working together to ensure responsible AI development and deployment. DS STREAM implements governance frameworks tailored to organizational context, risk tolerance, and regulatory requirements.

Governance Organizational Structure

Effective governance requires clear roles and responsibilities spanning business leadership, technical teams, risk management, legal, and compliance functions. DS STREAM helps organizations establish AI governance structures including executive AI governance committees providing strategic oversight and policy direction, AI ethics boards reviewing high-risk AI applications, model risk management teams assessing model risks and control effectiveness, compliance functions ensuring regulatory adherence, and technical governance teams implementing controls and monitoring. We define clear decision rights, escalation paths, and interaction patterns so that governance operates efficiently without becoming a bureaucratic bottleneck.

Policy and Standards Development

Governance policies establish principles and requirements guiding AI development and deployment. DS STREAM develops comprehensive policy frameworks covering AI ethics principles defining organizational values and commitments, model risk management policies establishing risk assessment and control requirements, data governance policies ensuring appropriate data usage and privacy protection, model development standards defining technical requirements for robustness, fairness, and explainability, deployment approval processes gating production releases, and incident response procedures handling model failures or ethical concerns. These policies balance necessary rigor with practical implementability, avoiding governance theater that creates paperwork without meaningful risk reduction.

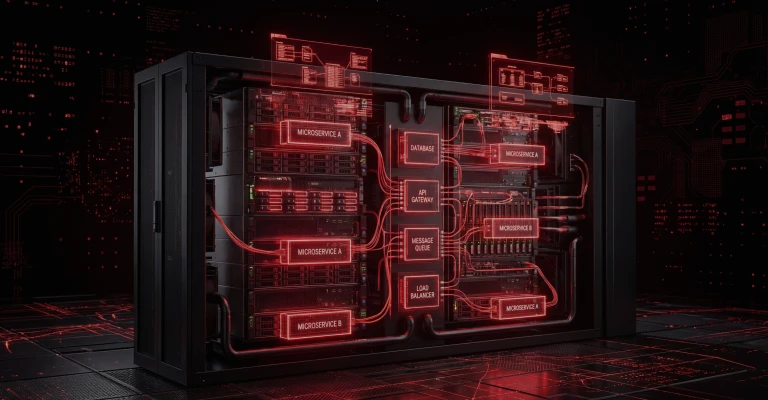

Model Inventory and Lifecycle Management

Organizations cannot govern models they don't know exist. Comprehensive model inventories catalog all AI/ML systems with metadata including use case and business purpose, model type and algorithms, data sources and features, deployment status and environment, model owner and stakeholders, risk classification, and approval status. DS STREAM implements model registry systems providing this inventory with lifecycle tracking from development through retirement. Registry integration with development workflows ensures models are cataloged automatically rather than requiring manual registration, improving compliance while reducing burden on development teams.
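To make the inventory concrete, here is a minimal Python sketch of a registry record with lifecycle tracking. It assumes a hypothetical in-house registry rather than any particular product's API: `ModelRecord`, `ModelInventory`, the stage names, and the rule that production deployment requires prior approval are all illustrative assumptions.

```python
from dataclasses import dataclass, field
from enum import Enum


class LifecycleStage(Enum):
    DEVELOPMENT = "development"
    VALIDATION = "validation"
    PRODUCTION = "production"
    RETIRED = "retired"


# Hypothetical transition rules: production is reachable only via validation.
ALLOWED_TRANSITIONS = {
    LifecycleStage.DEVELOPMENT: {LifecycleStage.VALIDATION, LifecycleStage.RETIRED},
    LifecycleStage.VALIDATION: {
        LifecycleStage.DEVELOPMENT,
        LifecycleStage.PRODUCTION,
        LifecycleStage.RETIRED,
    },
    LifecycleStage.PRODUCTION: {LifecycleStage.RETIRED},
    LifecycleStage.RETIRED: set(),
}


@dataclass
class ModelRecord:
    """One inventory entry; fields mirror the metadata listed in the text."""
    model_id: str
    use_case: str
    model_type: str
    data_sources: list[str]
    owner: str
    risk_tier: str
    approved: bool = False
    stage: LifecycleStage = LifecycleStage.DEVELOPMENT
    history: list[tuple[str, str]] = field(default_factory=list)

    def transition(self, new_stage: LifecycleStage) -> None:
        """Move to a new stage, enforcing transition rules and approval gating."""
        if new_stage not in ALLOWED_TRANSITIONS[self.stage]:
            raise ValueError(f"{self.stage.value} -> {new_stage.value} not allowed")
        if new_stage is LifecycleStage.PRODUCTION and not self.approved:
            raise ValueError("production deployment requires approval")
        self.history.append((self.stage.value, new_stage.value))
        self.stage = new_stage


class ModelInventory:
    """In-memory catalog keyed by model_id."""

    def __init__(self) -> None:
        self._records: dict[str, ModelRecord] = {}

    def register(self, record: ModelRecord) -> None:
        if record.model_id in self._records:
            raise ValueError(f"duplicate model_id {record.model_id}")
        self._records[record.model_id] = record

    def by_stage(self, stage: LifecycleStage) -> list[ModelRecord]:
        return [r for r in self._records.values() if r.stage is stage]
```

In a registry integrated with development workflows, a CI job (hypothetical here) would call `register` and `transition` automatically, so the inventory stays current without manual registration.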

Risk-Based Governance Approach

Governance rigor should align with risk exposure—high-risk models affecting human welfare, financial decisions, or legal compliance warrant stringent controls while low-risk models supporting internal operations require lighter governance. DS STREAM implements risk-based frameworks classifying models by impact and prescribing appropriate controls. High-risk models undergo comprehensive review, extensive testing, senior approval, and ongoing monitoring. Medium-risk models face moderate requirements. Low-risk models follow lightweight processes. This risk-based approach focuses governance resources on areas of greatest concern while enabling rapid iteration on lower-risk applications.
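The tiering logic can be expressed as a small rule set. In this sketch the classification rules and control lists are illustrative assumptions; in particular, treating customer-facing use as the medium-tier trigger is a placeholder, since the actual criteria come from an organization's own policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCaseProfile:
    affects_human_welfare: bool      # e.g. health, employment, housing
    drives_financial_decisions: bool
    has_legal_exposure: bool
    customer_facing: bool            # hypothetical medium-tier trigger


# Hypothetical control catalog per tier; real catalogs come from policy.
CONTROLS = {
    "high": ["comprehensive review", "extensive testing", "senior approval", "ongoing monitoring"],
    "medium": ["peer review", "standard testing", "team-lead approval", "periodic monitoring"],
    "low": ["self-assessment", "basic testing"],
}


def classify(profile: UseCaseProfile) -> str:
    """Assign a risk tier from the use-case profile."""
    if (profile.affects_human_welfare
            or profile.drives_financial_decisions
            or profile.has_legal_exposure):
        return "high"
    if profile.customer_facing:
        return "medium"
    return "low"


def required_controls(profile: UseCaseProfile) -> list[str]:
    """Look up the controls prescribed for the profile's tier."""
    return CONTROLS[classify(profile)]
```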

Model Explainability and Interpretability

Explainable AI capabilities enable stakeholders to understand why models make specific predictions, building trust and enabling effective oversight. Explainability is increasingly mandated by regulations requiring algorithmic transparency for consequential decisions. DS STREAM implements comprehensive explainability solutions spanning model types and use cases.

Intrinsically Interpretable Models

Linear models, decision trees, and rule-based systems provide inherent interpretability because the relationships between features and predictions are transparent. For use cases where explainability is paramount, DS STREAM recommends intrinsically interpretable models when they achieve acceptable performance. We apply techniques that improve interpretable model performance, including feature engineering that creates meaningful predictive features, regularization that prevents overfitting while maintaining interpretability, and ensemble methods such as rule ensembles that combine multiple interpretable models. These approaches can achieve performance approaching that of complex models while maintaining transparency.
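Because a logistic regression's log-odds is the intercept plus a sum of coefficient-times-feature terms, each prediction decomposes exactly into per-feature contributions. The sketch below assumes standardized inputs and uses made-up coefficients: `COEFFICIENTS` and `INTERCEPT` are hypothetical, not a fitted model.

```python
import math

# Hypothetical coefficients for a logistic model on standardized features.
INTERCEPT = -1.2
COEFFICIENTS = {"debt_to_income": 0.8, "years_employed": -0.5, "late_payments": 1.1}


def explain(features: dict[str, float]) -> tuple[float, dict[str, float]]:
    """Return the predicted probability and each feature's log-odds contribution."""
    contributions = {name: COEFFICIENTS[name] * features[name] for name in COEFFICIENTS}
    log_odds = INTERCEPT + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    return probability, contributions
```

The contributions plus the intercept reproduce the log-odds exactly, so the explanation is the model itself rather than an approximation of it.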

Post-Hoc Explainability for Complex Models

When model complexity is necessary to meet performance requirements, post-hoc explainability techniques provide transparency into black-box models. DS STREAM implements SHAP (SHapley Additive exPlanations) for theoretically grounded feature attribution, LIME (Local Interpretable Model-agnostic Explanations) for explaining individual predictions through local approximation, integrated gradients for deep learning models, attention visualization indicating which inputs a neural network weights most heavily (with the caveat that attention weights are not always faithful explanations), and counterfactual explanations showing which input changes would alter a prediction. These techniques produce both local explanations of individual predictions, supporting human review of specific decisions, and global explanations revealing overall model behavior patterns.
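To show what SHAP estimates, the sketch below computes exact Shapley values by brute force over every feature coalition, treating "absent" features as taking a baseline value. It illustrates the underlying definition under that single-baseline assumption and is not the `shap` library's API. Its cost grows as 2^n, which is why practical tools rely on approximations or model-specific algorithms.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Sequence


def shapley_values(
    predict: Callable[[Sequence[float]], float],
    x: Sequence[float],
    baseline: Sequence[float],
) -> list[float]:
    """Exact Shapley attributions; features outside a coalition use the baseline."""
    n = len(x)

    def value(coalition: frozenset[int]) -> float:
        # Coalition members take their actual value; the rest take the baseline.
        point = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(point)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = frozenset(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi
```

For example, for f(z) = 2z0 + 3z1 + z0·z1 with x = (1, 1) and a zero baseline, the function returns [2.5, 3.5]: the interaction term is split evenly, and the attributions sum to f(x) minus f(baseline).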

Explanation User Interfaces and Integration

Explainability capabilities deliver value only when accessible to appropriate stakeholders through intuitive interfaces. DS STREAM implements explanation user interfaces tailored to different audiences including data scientists receiving detailed technical explanations, business users seeing simplified feature importance and decision factors, customers receiving user-friendly explanations for decisions affecting them, and regulators accessing comprehensive documentation and aggregate model behavior analysis. Explanation integration with application systems enables real-time explanation delivery alongside predictions, supporting human-in-the-loop workflows where users review and validate model recommendations.

Fairness Assessment and Bias Mitigation

AI systems can perpetuate or amplify societal biases present in training data, leading to discriminatory outcomes. Fairness assessment and bias mitigation capabilities are ethical imperatives and increasingly regulatory requirements. DS STREAM implements comprehensive fairness solutions addressing bias throughout the ML lifecycle.

Fairness Metrics and Assessment

Fairness assessment begins with defining appropriate fairness criteria: demographic parity ensuring equal prediction rates across groups, equalized odds ensuring equal true positive and false positive rates, equal opportunity ensuring equal true positive rates, or predictive parity ensuring equal positive predictive values. Different use cases warrant different fairness definitions based on legal requirements and ethical considerations, and when base rates differ between groups these criteria generally cannot all be satisfied at once, so choosing among them is a genuine trade-off. DS STREAM works with legal, compliance, and business stakeholders to define appropriate fairness criteria, then implements automated fairness testing measuring model performance across protected groups. Assessment identifies disparate impact requiring remediation before production deployment.
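The four criteria map onto a handful of per-group rates. This sketch computes them from binary labels and predictions in plain Python. The function names are illustrative, and the choice of which gap to enforce, and how large a gap is acceptable, remains a policy decision.

```python
import math
from collections import defaultdict


def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, TPR, FPR and PPV for binary labels/predictions."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for t, p, g in zip(y_true, y_pred, groups):
        # "t"/"f" = prediction correct or not; "p"/"n" = predicted positive or negative.
        key = ("t" if p == t else "f") + ("p" if p == 1 else "n")
        counts[g][key] += 1
    rates = {}
    for g, c in counts.items():
        n = c["tp"] + c["fp"] + c["tn"] + c["fn"]
        actual_pos = c["tp"] + c["fn"]
        actual_neg = c["fp"] + c["tn"]
        pred_pos = c["tp"] + c["fp"]
        rates[g] = {
            "selection_rate": pred_pos / n,
            "tpr": c["tp"] / actual_pos if actual_pos else float("nan"),
            "fpr": c["fp"] / actual_neg if actual_neg else float("nan"),
            "ppv": c["tp"] / pred_pos if pred_pos else float("nan"),
        }
    return rates


def max_gap(rates, metric):
    """Largest between-group difference for a metric, ignoring undefined (nan) rates."""
    values = [r[metric] for r in rates.values() if not math.isnan(r[metric])]
    return max(values) - min(values) if len(values) > 1 else float("nan")
```

Demographic parity compares `selection_rate`, equal opportunity compares `tpr`, equalized odds compares both `tpr` and `fpr`, and predictive parity compares `ppv`. An undefined rate (for example, a group with no actual positives) should be treated as insufficient data rather than as a pass.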

Bias Mitigation Techniques

Bias mitigation addresses unfairness through pre-processing techniques modifying training data to reduce bias, in-processing techniques adding fairness constraints to model training, and post-processing techniques adjusting predictions to achieve fairness criteria. DS STREAM implements appropriate mitigation strategies balancing fairness improvements with performance impact. Techniques include reweighting training examples, adversarial debiasing, fairness constraints in objective functions, and threshold optimization. Mitigation strategies are validated through comprehensive testing ensuring bias reduction without unacceptable performance degradation. Documentation of fairness assessment and mitigation provides evidence of due diligence for regulatory and legal purposes.
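Reweighting is the simplest pre-processing option to illustrate. The sketch follows the reweighing scheme described by Kamiran and Calders, assigning each (group, label) cell the weight P(group) · P(label) / P(group, label) so that group membership and label become independent in the weighted data. It assumes every (group, label) combination that should be balanced actually appears in the training set; reweighting cannot create examples for an empty cell.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Per-example weights w(g, y) = P(g) * P(y) / P(g, y)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] / n) * (label_counts[y] / n) / (joint_counts[(g, y)] / n)
        for g, y in zip(groups, labels)
    ]
```

Many scikit-learn estimators accept such weights through the `sample_weight` argument of `fit`, which keeps the mitigation step separate from the choice of model.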

Ongoing Fairness Monitoring

Fairness is not a one-time assessment but requires ongoing monitoring as model performance and population distributions evolve. DS STREAM integrates fairness monitoring with model monitoring infrastructure, continuously calculating fairness metrics across protected groups and alerting when disparate impact emerges. This proactive monitoring enables rapid response to fairness degradation before significant impact occurs, supporting responsible AI operations at scale.
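Continuous monitoring can apply a simple ratio test per time window. This sketch computes the ratio of selection rates between a protected and a reference group for each batch of predictions and flags ratios below 0.8, the "four-fifths" rule of thumb from US employment-selection guidance. The threshold, window structure, and function names are assumptions to be replaced by the organization's own fairness criteria.

```python
def selection_rate(preds, groups, group):
    """Share of positive predictions for one group, or None if the group is absent."""
    selected = [p for p, g in zip(preds, groups) if g == group]
    if not selected:
        return None
    return sum(selected) / len(selected)


def monitor(windows, protected, reference, threshold=0.8):
    """Return (window_index, ratio, status) for each (preds, groups) batch."""
    results = []
    for i, (preds, groups) in enumerate(windows):
        p = selection_rate(preds, groups, protected)
        r = selection_rate(preds, groups, reference)
        if p is None or not r:
            # Cannot compute a ratio; flag for review instead of guessing.
            results.append((i, None, "insufficient_data"))
            continue
        ratio = p / r
        results.append((i, ratio, "alert" if ratio < threshold else "ok"))
    return results
```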

Regulatory Compliance Frameworks and Implementation

AI systems increasingly face sector-specific and jurisdictional regulations requiring compliance capabilities embedded throughout ML workflows. DS STREAM's experience in regulated industries informs comprehensive compliance solutions.

Healthcare AI Compliance: FDA and HIPAA

Healthcare AI systems face FDA oversight when functioning as medical devices or clinical decision support systems. DS STREAM implements FDA compliance capabilities including comprehensive documentation of model development and validation, software development lifecycle controls meeting quality system regulations, clinical validation demonstrating safety and effectiveness, post-market surveillance monitoring deployed system performance, and change control processes for model updates. HIPAA compliance requires data privacy controls, access restrictions, audit logging, and breach notification capabilities integrated throughout ML pipelines. Our healthcare AI solutions address both FDA and HIPAA requirements, enabling compliant clinical AI deployment.

Financial Services: Model Risk Management and Fair Lending

Financial services AI faces oversight from banking regulators whose model risk management frameworks require comprehensive model documentation, independent validation, ongoing monitoring, and governance processes. DS STREAM implements MRM-compliant frameworks addressing SR 11-7 (the supervisory guidance on model risk management issued by the Federal Reserve and the OCC) and similar guidance, with model development documentation, comprehensive validation testing, limitation and assumption documentation, and ongoing performance monitoring. Fair lending laws, including the Equal Credit Opportunity Act and the Fair Housing Act, prohibit discriminatory lending practices, requiring fairness testing for credit models. We implement fair lending compliance with disparate impact testing, explainability for adverse action notices, and model documentation supporting regulatory examinations.
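Adverse action notices must state the specific principal reasons for a denial. Assuming per-feature contributions are already available (for example, from a linear scorecard or Shapley attributions), a minimal sketch ranks the features that pushed the score toward denial and maps them to consumer-facing text. The feature names and `REASON_TEXT` statements are hypothetical; the default of four reasons follows Regulation B commentary, which notes that disclosing more than four reasons is unlikely to help the applicant, and is not a requirement this code enforces.

```python
# Hypothetical mapping from model features to consumer-facing reason statements.
REASON_TEXT = {
    "late_payments": "Number of recent late payments",
    "debt_to_income": "Debt obligations relative to income",
    "credit_history_months": "Length of credit history",
    "recent_inquiries": "Number of recent credit inquiries",
}


def adverse_action_reasons(contributions: dict[str, float], top_k: int = 4) -> list[str]:
    """Reason statements for the features that most increased risk.

    Assumes positive contributions push the score toward denial.
    """
    adverse = [(name, value) for name, value in contributions.items() if value > 0]
    adverse.sort(key=lambda item: item[1], reverse=True)
    # Fall back to the raw feature name if no reason text is defined.
    return [REASON_TEXT.get(name, name) for name, _ in adverse[:top_k]]
```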

EU AI Act and GDPR Compliance

European operations face the EU AI Act, which establishes risk-based regulatory requirements for AI systems, and the GDPR, which governs data usage and privacy. DS STREAM implements EU AI Act compliance for high-risk AI systems with conformity assessment processes, comprehensive technical documentation, risk management systems, data governance, transparency requirements, and human oversight mechanisms. GDPR compliance requires a lawful basis for data processing, data minimization, safeguards for solely automated decisions (including meaningful information about the logic involved and the right to obtain human intervention), and implementation of data subject rights. Our solutions address both regulatory frameworks, enabling compliant AI deployment in European markets.

Industry-Specific Regulatory Requirements

Beyond horizontal regulations, industry-specific requirements affect AI systems. Telecommunications faces requirements around customer data privacy and network reliability. FMCG and retail encounter consumer protection regulations. Our extensive industry experience enables tailored compliance solutions addressing specific regulatory landscapes, ensuring AI systems meet applicable requirements while supporting business objectives.

Model Risk Quantification and Management

Model risk—the potential for adverse consequences from decisions based on incorrect or misused model outputs—requires systematic identification, quantification, and mitigation. DS STREAM implements comprehensive model risk management frameworks.

Model Risk Assessment Frameworks

Risk assessment evaluates models across multiple dimensions including model complexity and interpretability, data quality and representativeness, development process rigor, validation comprehensiveness, deployment context and usage, and potential impact of incorrect predictions. DS STREAM implements structured risk assessment frameworks scoring models across these dimensions, generating risk ratings guiding governance requirements. High-risk models undergo extensive controls while lower-risk models follow streamlined processes. Risk assessments occur at initial deployment and periodically thereafter, adjusting as usage or context changes.
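A structured assessment of this kind is often implemented as a weighted scorecard. The dimensions below follow the list above, but the weights, the 1-to-5 scale, the rating cut-offs, and the override for severe potential impact are all illustrative assumptions.

```python
# Hypothetical weights (summing to 1); each dimension is scored 1 (low risk) to 5 (high risk).
WEIGHTS = {
    "complexity": 0.15,
    "data_quality": 0.20,
    "development_rigor": 0.15,
    "validation_coverage": 0.15,
    "deployment_context": 0.15,
    "impact_of_error": 0.20,
}


def risk_rating(scores: dict[str, int]) -> tuple[float, str]:
    """Weighted score and rating; a maximal impact score forces a 'high' rating."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"unscored dimensions: {sorted(missing)}")
    if any(not 1 <= s <= 5 for s in scores.values()):
        raise ValueError("scores must be between 1 and 5")
    total = sum(WEIGHTS[d] * scores[d] for d in WEIGHTS)
    # Severe potential impact overrides an otherwise favorable average.
    if scores["impact_of_error"] == 5 or total >= 3.5:
        return total, "high"
    if total >= 2.5:
        return total, "medium"
    return total, "low"
```

Re-running the same function at each periodic reassessment, with updated scores, gives a consistent record of how a model's rating changes as its usage or context evolves.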

Model Validation and Independent Review

Independent model validation provides objective assessment of model quality, appropriateness, and limitations. Validation encompasses conceptual soundness review assessing model design and methodology, implementation verification ensuring correct code execution, performance testing across diverse scenarios, outcome analysis comparing predictions to actuals, limitation and assumption documentation, and alternative model comparison. DS STREAM provides independent validation services or establishes internal validation capabilities, ensuring models undergo rigorous assessment before production deployment and periodically thereafter.

Model Limitation and Assumption Documentation

All models operate under assumptions and limitations that constrain appropriate usage. Comprehensive documentation captures data assumptions and quality dependencies, applicable scope and out-of-scope scenarios, known limitations and edge cases, performance characteristics under various conditions, and update frequency requirements. This documentation guides appropriate model usage, prevents misapplication, and supports governance decisions. DS STREAM implements automated documentation generation where possible, reducing manual burden while ensuring completeness.

Model Risk Mitigation Controls

Risk mitigation implements controls proportional to risk exposure including approval workflows requiring senior review for high-risk models, human-in-the-loop patterns requiring human validation of high-stakes predictions, prediction confidence thresholds routing low-confidence predictions for review, challenger models providing independent second opinions, and override mechanisms enabling human judgment when necessary. Control effectiveness is monitored and validated, ensuring mitigation strategies function as designed.
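Confidence thresholds and challenger comparisons combine naturally into a single routing rule. The sketch assumes a binary classifier whose champion and challenger models both emit a probability; the threshold values and the `route` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RoutingDecision:
    action: str  # "auto" or "human_review"
    reason: str


def route(champion_prob: float, challenger_prob: float,
          confidence_floor: float = 0.8, max_disagreement: float = 0.2,
          high_stakes: bool = False) -> RoutingDecision:
    """Decide whether a binary prediction may be acted on without human review."""
    if high_stakes:
        return RoutingDecision("human_review", "high-stakes decision requires human validation")
    # Confidence is the probability assigned to whichever class is predicted.
    confidence = max(champion_prob, 1.0 - champion_prob)
    if confidence < confidence_floor:
        return RoutingDecision("human_review", f"low confidence ({confidence:.2f})")
    if abs(champion_prob - challenger_prob) > max_disagreement:
        return RoutingDecision("human_review", "champion and challenger disagree")
    return RoutingDecision("auto", "within thresholds")
```

Keeping the `reason` string on every decision means each human review can later be traced to the control that triggered it.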

Audit Trails and Documentation Management

Comprehensive audit trails and documentation provide evidence of responsible AI practices, support regulatory examinations, and enable organizational learning. DS STREAM implements documentation systems capturing complete ML lifecycle history.

Automated Audit Trail Generation

Manual documentation creation is burdensome and error-prone. DS STREAM implements automated audit trail generation capturing data lineage connecting models to source data, code versioning tracking all development changes, experiment history documenting model development iterations, approval records capturing governance decisions, deployment history tracking production releases, and monitoring data recording model performance over time. Automation ensures comprehensive documentation without manual overhead, improving compliance while reducing burden on development teams.
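One common way to make an automated audit trail tamper-evident is a hash chain: each entry stores the SHA-256 hash of its own contents together with the previous entry's hash, so altering any past record breaks verification. This is a minimal in-memory sketch with illustrative event types; a production system would also persist entries to write-once storage, since hashing detects tampering but does not prevent it.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64


class AuditTrail:
    """Append-only log where each entry commits to its predecessor's hash."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, event_type: str, details: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "event_type": event_type,
            "details": details,
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    @staticmethod
    def _digest(entry: dict) -> str:
        # Canonical JSON (sorted keys, no whitespace) of everything except the hash.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def verify(self) -> bool:
        """Recompute every hash and link; any edit to a past entry returns False."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True
```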

Model Documentation Standards

Standardized model documentation templates ensure consistent, comprehensive documentation across models. DS STREAM implements model cards documenting intended use, training data, performance characteristics, limitations, and ethical considerations. Documentation generation integrates with development workflows, auto-populating templates with available metadata and prompting data scientists for required information. Centralized documentation repositories provide searchable access to stakeholders requiring model information—governance teams, validators, auditors, or business users.
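Template auto-population can be as simple as rendering known metadata into fixed sections and reporting which sections still need input from the data scientist. The section keys and the TODO placeholder below are assumptions for illustration, not a standard model-card schema.

```python
# Sections drawn from the model-card contents named in the text; keys are hypothetical.
REQUIRED_SECTIONS = [
    "intended_use",
    "training_data",
    "performance",
    "limitations",
    "ethical_considerations",
]


def build_model_card(model_name: str, metadata: dict) -> tuple[str, list[str]]:
    """Render a plain-text model card and list the sections still missing."""
    missing = [s for s in REQUIRED_SECTIONS if not metadata.get(s)]
    lines = [f"Model card: {model_name}", ""]
    for section in REQUIRED_SECTIONS:
        lines.append(section.replace("_", " ").capitalize())
        # Missing sections get a visible placeholder that prompts the model owner.
        lines.append(str(metadata.get(section) or "TODO: to be completed by model owner"))
        lines.append("")
    return "\n".join(lines), missing
```

The `missing` list is what a workflow would use to prompt the data scientist, or to block approval until every required section is filled in.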

Regulatory Reporting and Examination Support

Regulatory examinations require comprehensive documentation and evidence of governance practices. DS STREAM's documentation systems facilitate regulatory reporting with audit trail export capabilities, comprehensive model inventories, evidence of fairness testing and bias mitigation, validation documentation and reports, and governance meeting records and decisions. Well-organized documentation accelerates examinations and demonstrates organizational commitment to responsible AI, reducing regulatory concerns and potential enforcement actions.

Data Governance for AI Systems

AI systems depend on high-quality, appropriately governed data. Data governance for AI addresses data quality, privacy, security, and ethical usage.

Data Quality Management

Model quality depends fundamentally on training data quality. Data governance establishes data quality standards, implements automated quality monitoring, provides data quality dashboards, and triggers data remediation workflows when quality degrades. DS STREAM integrates data quality management with model governance, ensuring models train only on data that meets quality standards. Data quality issues trigger model revalidation, preventing quality degradation from propagating to model predictions.
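A quality gate in front of training can be sketched as a set of declarative rules checked before any fit is allowed. The rules, thresholds, column names, and the `training_gate` hook are hypothetical; in practice they would come from the organization's data quality standards.

```python
import math

# Hypothetical rules: maximum null fraction and allowed value range per column.
RULES = {
    "age": {"max_null_fraction": 0.01, "min": 18, "max": 120},
    "income": {"max_null_fraction": 0.05, "min": 0, "max": None},
}


def _is_missing(value) -> bool:
    return value is None or (isinstance(value, float) and math.isnan(value))


def check_quality(rows: list[dict]) -> list[str]:
    """Return rule violations; an empty list means the data passes."""
    violations = []
    for column, rule in RULES.items():
        values = [row.get(column) for row in rows]
        nulls = sum(1 for v in values if _is_missing(v))
        null_fraction = nulls / len(values) if values else 1.0
        if null_fraction > rule["max_null_fraction"]:
            violations.append(f"{column}: null fraction {null_fraction:.2%} exceeds limit")
        present = [v for v in values if not _is_missing(v)]
        if rule["min"] is not None and any(v < rule["min"] for v in present):
            violations.append(f"{column}: values below {rule['min']}")
        if rule["max"] is not None and any(v > rule["max"] for v in present):
            violations.append(f"{column}: values above {rule['max']}")
    return violations


def training_gate(rows: list[dict]) -> None:
    """Raise before training if the data violates any quality rule."""
    violations = check_quality(rows)
    if violations:
        raise RuntimeError("training blocked: " + "; ".join(violations))
```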

Privacy-Preserving ML Techniques

Privacy regulations and ethical considerations require minimizing exposure of sensitive personal information. DS STREAM implements privacy-preserving ML techniques including differential privacy, which adds calibrated noise so that outputs reveal little about any individual record; federated learning, which trains models across distributed data without centralizing it; synthetic data generation, which creates realistic training data without exposing real records; and secure multi-party computation, which enables collaborative learning without sharing raw data. These techniques enable ML development while protecting individual privacy, addressing both regulatory requirements and ethical obligations.
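Differential privacy is easiest to see on a single counting query. Adding or removing one person changes a count by at most 1, so the Laplace mechanism adds noise with scale 1/epsilon. The sketch below uses only the standard library and is illustrative: naive floating-point sampling has known weaknesses, so production systems generally rely on vetted differential privacy libraries.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two i.i.d. exponentials with mean `scale` is Laplace(0, scale).
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy via the Laplace mechanism.

    A count has sensitivity 1, so the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)
```

Smaller epsilon means stronger privacy and noisier answers; each additional release of the same data consumes more of the overall privacy budget.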

Data Lineage and Provenance Tracking

Understanding data origins and transformations is essential for governance and debugging. DS STREAM implements comprehensive data lineage tracking connecting models to source data systems, documenting all transformations and aggregations, tracking data version and collection timeframes, and recording data access and usage. This lineage supports governance reviews, enables impact analysis when source data changes, and facilitates debugging when data quality issues emerge. Lineage visualization provides intuitive understanding of complex data dependencies across ML systems.
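Impact analysis over lineage is a graph traversal: when a source changes, every node reachable downstream is potentially affected. The graph below is a hypothetical example, and prefixing model nodes with `model.` is an assumed naming convention.

```python
from collections import deque

# Hypothetical lineage graph: each node lists the nodes that consume it.
LINEAGE = {
    "crm.customers": ["features.customer_profile"],
    "billing.invoices": ["features.payment_history"],
    "features.customer_profile": ["model.churn_v2", "model.upsell_v1"],
    "features.payment_history": ["model.churn_v2", "model.credit_limit_v4"],
}


def downstream(node: str, graph: dict[str, list[str]]) -> set[str]:
    """All nodes reachable from `node` (breadth-first), i.e. everything it feeds."""
    seen: set[str] = set()
    queue = deque([node])
    while queue:
        current = queue.popleft()
        for child in graph.get(current, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def impacted_models(source: str, graph: dict[str, list[str]]) -> list[str]:
    """Models that may need revalidation when `source` changes."""
    return sorted(n for n in downstream(source, graph) if n.startswith("model."))
```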

Ethical AI Principles and Implementation

Beyond regulatory compliance, organizations increasingly commit to ethical AI principles guiding responsible development. DS STREAM helps organizations translate ethical principles into operational practices.

Ethical Principle Development

Organizations develop AI ethics principles reflecting values and commitments to stakeholders. Common principles include fairness and non-discrimination, transparency and explainability, privacy and data protection, accountability and human oversight, safety and robustness, and beneficial purpose. DS STREAM facilitates principle development through stakeholder engagement, benchmarking against industry practices, and legal and regulatory alignment. Principles should be specific enough to guide decisions while remaining flexible across diverse use cases.

Operationalizing Ethical Principles

Principles deliver value only when translated to operational practices. DS STREAM implements principle operationalization through technical requirements translating principles to testable criteria, review processes assessing principle adherence, training programs building awareness and capability, and metrics tracking principle implementation effectiveness. For example, fairness principles translate to fairness testing requirements, transparency principles to explainability capabilities, and accountability principles to approval workflows. This operationalization ensures principles influence actual AI development rather than remaining aspirational statements.

Ethics Review Boards and Processes

Ethics review boards provide governance oversight for AI systems raising ethical concerns beyond routine governance processes. DS STREAM helps establish ethics boards with diverse membership spanning technical, legal, ethical, and business perspectives. Boards review high-risk AI applications, novel use cases, or systems raising stakeholder concerns. Review processes balance rigor with efficiency, providing thoughtful oversight without becoming deployment bottlenecks. Board recommendations inform development teams, governance committees, and executive leadership, ensuring ethical considerations receive appropriate weight in AI deployment decisions.

Incident Response and Issue Management

Despite best efforts, AI systems may experience failures, fairness issues, or unintended consequences requiring systematic response. DS STREAM implements incident response capabilities for AI systems.

AI Incident Detection and Reporting

Incident detection combines automated monitoring alerting on model performance degradation, fairness metric violations, or data quality issues with stakeholder reporting channels enabling users, customers, or employees to report concerns. DS STREAM implements incident reporting systems with clear escalation paths, initial triage processes, and routing to appropriate response teams. Incident categorization by severity guides response urgency and stakeholder notification requirements.

Incident Investigation and Root Cause Analysis

Incidents require thorough investigation determining root causes and appropriate remediation. Investigation processes examine model behavior and predictions, data quality and distribution, deployment and infrastructure issues, usage patterns and user interactions, and external factors affecting model environment. DS STREAM implements investigation frameworks with defined timelines, responsible parties, and documentation requirements ensuring thorough, consistent incident analysis. Root cause analysis distinguishes symptoms from underlying causes, enabling effective remediation rather than superficial fixes.

Remediation and Continuous Improvement

Incident findings drive remediation including immediate fixes addressing acute issues, model updates correcting underlying problems, process improvements preventing recurrence, and stakeholder communication providing transparency. DS STREAM implements remediation tracking ensuring incidents resolve completely rather than languishing unaddressed. Incident data feeds continuous improvement, identifying patterns across incidents and informing governance enhancements. Organizations learn from incidents, progressively strengthening AI risk management through experience.