From IVRs to AI Voice Agents

For years, “automation” in customer service meant rigid IVR trees and simple voicebots that could barely handle anything beyond “press 1 for sales, 2 for support.” A McKinsey‑cited survey (1) found that seven out of ten companies have IVR systems that contain 30% of calls or fewer, meaning most customers still end up needing a human after navigating menus. At the same time, more than half of customers say they have abandoned a business because they reached an automated menu instead of a person, and 61% feel that IVR makes for a poor customer experience.

Modern conversational AI has changed that baseline. Today’s voice agents can hold low‑latency, human‑like, multi‑turn conversations, recognize intent, pick up sentiment, and interact with back‑end systems in real time. In an academic study of 50,000 real support calls, adding a voice AI assistant reduced average handle time by 20–40% and automatically resolved between roughly a third and more than four‑fifths of calls, depending on the use case. The potential is clear, but the quality of the experience still depends entirely on how these agents are designed, governed and integrated (2).

Why Full Automation Is the Destination, Not the Starting Point

In customer service, “full automation” is often presented as a switch you flip. In reality, it is closer to a destination you reach step by step. AI voice agents may eventually handle the vast majority of routine conversations end‑to‑end, but they only get there after spending time in the real world, exposed to messy edge cases, ambiguous questions and changing policies.

Early deployments are usually a shared effort between machines and people. The agent takes the first line of simple, repetitive work (checking order status, confirming bookings, updating contact details), while humans handle situations that are emotionally charged, legally sensitive or poorly structured. This coexistence is not a sign of immaturity; it is how the system learns where it is genuinely reliable and where human judgment is still essential. Forrester (3) expects that by 2026, one in four brands will see at least a 10% increase in successful simple self‑service interactions as intelligent chat and voice agents take over more of this front line, but emphasizes that maturity will vary by use case and organization.

Over time, teams study what actually happens on calls: where the agent hesitates, where customers ask for a person, which intents repeatedly trigger hand‑offs. They tighten guardrails, refine conversation flows and adjust escalation rules. Gradually, more scenarios move from “AI supports a human” to “AI leads, human is available when needed.” Full automation on selected lines then becomes an informed choice, backed by data and experience, rather than a risky leap of faith.

Thinking about automation as a journey rather than a binary goal changes the design mindset. The question stops being “Can we remove humans from this process?” and becomes “Where does automation genuinely improve experience, and where does it still need a human partner?” That perspective leads to systems that are not only more efficient, but also more trustworthy for the people who depend on them.

Human in the Loop: Safety Net for Sensitive Use Cases

Human in the loop is not just a fallback; it is the safety net that makes AI voice agents viable in sensitive environments. In domains like healthcare, HR or financial services, the cost of a wrong answer is measured not only in bad CX, but in real harm, regulatory exposure or broken trust. That is why mature deployments draw a clear line between what the agent is allowed to do automatically and what must be escalated. The voice agent can handle appointment booking, reminders, basic status checks or FAQs on its own, but it is explicitly designed to hand off complex diagnoses, emotionally charged conversations or edge‑case disputes to trained staff.

Crucially, this handoff is not a drop‑out, but a continuation of the same interaction. When the AI detects uncertainty, risk signals or specific intents, it passes the call, along with the transcript, a summary and key context, to a human so the caller does not have to repeat their story from scratch. This directly addresses a common source of frustration in self‑service: recent research highlights that customers are especially dissatisfied when they cannot move smoothly from automated systems to live agents without starting over. In practice, humans remain responsible for judgment calls, empathy and exceptions, while the agent takes care of structured, repetitive work. The result is not “AI versus humans,” but a layered system in which automation does the heavy lifting and people stay in control of what truly matters (4).
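To make the handoff rule concrete, here is a minimal sketch in Python. It assumes the agent exposes a classified intent and a confidence score for each call. The intent names, the 0.7 threshold and the field names are all hypothetical, chosen for illustration rather than taken from any specific platform.

```python
from dataclasses import dataclass, field

# Hypothetical intents that should always reach a human (illustrative only).
ALWAYS_ESCALATE = {"medical_concern", "legal_dispute", "serious_complaint"}


@dataclass
class CallState:
    call_id: str
    intent: str
    confidence: float  # assumed classifier confidence, 0.0 to 1.0
    transcript: list[str] = field(default_factory=list)
    summary: str = ""


def should_escalate(state: CallState, min_confidence: float = 0.7) -> bool:
    """Escalate on sensitive intents or when the agent is unsure."""
    return state.intent in ALWAYS_ESCALATE or state.confidence < min_confidence


def build_handoff(state: CallState) -> dict:
    """Package the context a human needs to continue without a restart."""
    return {
        "call_id": state.call_id,
        "intent": state.intent,
        "summary": state.summary,
        "transcript": list(state.transcript),
    }


state = CallState(
    call_id="c-1",
    intent="medical_concern",
    confidence=0.95,
    transcript=["I have had chest pain since this morning."],
    summary="Caller reports chest pain since this morning.",
)
if should_escalate(state):
    packet = build_handoff(state)  # would be sent to the human agent's desktop
```

The point of the sketch is the shape of the decision: a short, auditable rule for when to escalate, and a handoff packet that carries the transcript and summary with the call.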

Learning From Every Call: The Power of Feedback Loops

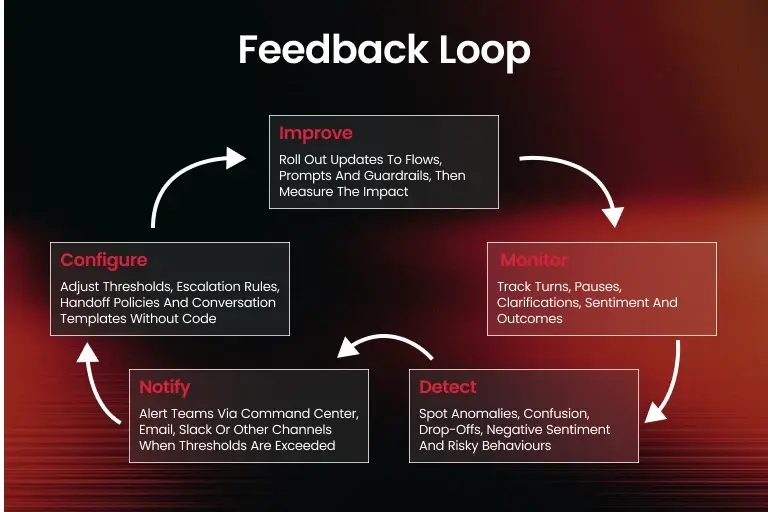

Great AI voice agents are not defined only by the model behind them, but by the feedback loops wrapped around their everyday use. Two systems can use similar speech recognition and large language models. One gradually becomes more accurate, helpful and trustworthy, while the other stagnates or quietly degrades. The difference is whether each call is treated as a one‑off event, or as data that feeds back into design, policy and engineering decisions. In a mature setup, teams continuously analyze transcripts, sentiment, drop‑offs and escalation patterns to understand where conversations flow naturally and where users get stuck or frustrated.

These loops usually follow a simple rhythm: monitor, detect, adjust, repeat. Operations and product teams watch how real callers phrase their questions, which intents are misclassified, when people ask for a human, and where compliance or safety rules are triggered. They then refine prompts, tweak guardrails, update knowledge bases and adjust escalation thresholds, pushing changes through proper review and deployment processes instead of ad‑hoc fixes. Over weeks and months, this steady tuning is what separates a mediocre agent that “mostly works” from one that feels increasingly aligned with the organization’s policies, language and customers. Without feedback loops, even the best AI slowly drifts out of sync with reality; with them, it becomes a living system that learns from every interaction.
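The "detect" step of that rhythm can be as simple as an aggregate over call logs. The sketch below assumes each logged call is reduced to an `(intent, was_escalated)` pair and flags intents whose escalation rate looks high enough to review. The function name, the 30% threshold and the minimum sample size are illustrative assumptions, not recommended values.

```python
from collections import Counter


def intents_to_review(calls, max_escalation_rate=0.3, min_calls=20):
    """Return {intent: escalation_rate} for intents that escalate too often.

    `calls` is an iterable of (intent, was_escalated) pairs. Intents with
    fewer than `min_calls` samples are skipped to avoid noisy flags.
    """
    totals, escalated = Counter(), Counter()
    for intent, was_escalated in calls:
        totals[intent] += 1
        if was_escalated:
            escalated[intent] += 1

    flagged = {}
    for intent, n in totals.items():
        rate = escalated[intent] / n
        if n >= min_calls and rate > max_escalation_rate:
            flagged[intent] = rate
    return flagged


# Illustrative data: 30 refund calls (15 escalated), 40 order-status calls.
calls = (
    [("refund", True)] * 15
    + [("refund", False)] * 15
    + [("order_status", False)] * 40
)
print(intents_to_review(calls))  # {'refund': 0.5}
```

A flagged intent is a prompt for human review of transcripts, not an automatic change; the adjustment itself still goes through the review and deployment process described above.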

Turning Every Call Into a Data Stream

Live telemetry is what turns AI voice agents from clever add‑ons into part of the operational nervous system of a company. Instead of treating calls as moments that disappear once they end, a telemetry‑first approach captures what happens in real time: how long calls take, which intents appear, when people drop off, how often the agent hesitates or escalates, and what outcomes are reached. This stream of metrics, logs and traces makes performance visible not only to engineers, but also to operations and CX teams who need to understand what the system is actually doing on busy Monday mornings or during seasonal peaks.

Viewed this way, every conversation becomes structured data. Dashboards and command centers can show live queues, error spikes, unusual patterns in sentiment, or a sudden rise in calls about a particular topic. When something looks off (latency creeping up, a new flow causing confusion, a guardrail firing too often), teams can intervene quickly, adjust configuration or temporarily route more calls to humans. Over time, the same telemetry underpins more strategic decisions: which use cases are ready for deeper automation, where scripts or knowledge need updating, and how changes to policies or products ripple through customer behavior. Live monitoring is not just about keeping the lights on; it is the foundation for treating voice agents as measurable, improvable parts of an enterprise‑grade customer experience.
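As a small illustration of catching "latency creeping up", the sketch below keeps a rolling window of response latencies and signals when the rolling mean crosses a threshold. The class name, window size and 800 ms threshold are assumptions made for the example; a production setup would more likely rely on an existing metrics and alerting stack.

```python
from collections import deque


class LatencyMonitor:
    """Track a rolling mean of agent response latency (hypothetical helper)."""

    def __init__(self, window: int = 50, threshold_ms: float = 800.0):
        self.samples = deque(maxlen=window)  # oldest samples fall out automatically
        self.threshold_ms = threshold_ms

    def record(self, latency_ms: float) -> bool:
        """Add a sample; return True when the rolling mean exceeds the threshold."""
        self.samples.append(latency_ms)
        mean = sum(self.samples) / len(self.samples)
        return mean > self.threshold_ms


monitor = LatencyMonitor(window=3, threshold_ms=500.0)
for latency in (400.0, 450.0, 900.0):
    if monitor.record(latency):
        # In practice this might page the on-call team or route more calls to humans.
        print(f"rolling latency above threshold after {latency} ms sample")
```

Using a rolling mean rather than single samples keeps one slow response from triggering an intervention, while a sustained rise still surfaces quickly.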

Omnichannel CX: Voice Agents as One Piece of the Puzzle

Omnichannel customer experience is built on the idea that people do not think in “channels”; they simply expect to pick up a conversation wherever they left off. A customer might first see an offer in an app, ask a question over chat, get a follow‑up call, and then confirm details by email. To them, it is a single, continuous interaction. In that context, AI voice agents are one important piece of a larger puzzle, not a standalone solution. They need to share the same knowledge, policies and history as chatbots, human agents and self‑service portals, so the story stays consistent no matter how the customer reaches out.

Practically, this means treating the voice agent as another interface on top of a common “brain” and data layer. The intent models, business rules and guardrails used in chat or messaging should align with those used on the phone, and context from one channel should be available in the others, so a complaint started in chat can be picked up on a call without anyone having to repeat themselves. Yet in Deloitte’s Global Contact Center Survey (5), only 7% of contact centers said they could seamlessly transition customers between channels while preserving interaction data and context, which shows how much room there is for improvement. When this works, voice becomes a natural entry point or escalation path within a wider journey: an option for those who prefer to talk, for complex issues that are hard to type, or for moments when speed and reassurance matter most. Omnichannel CX is not about replacing humans or forcing customers into automation; it is about orchestrating people, AI agents and channels so that every interaction feels coherent, regardless of where it starts (6).

From Retail to Healthcare: How This Plays Out in Practice

In retail, CPG/FMCG, hospitality and healthcare, the impact of AI voice agents shows up in the everyday frictions that customers and patients already feel.

In retail and consumer goods, many calls revolve around:

- order tracking,

- delivery changes,

- product availability,

- loyalty points.

In hospitality, they concern:

- bookings,

- check‑in times,

- in‑room requests,

- directions.

In healthcare, they often focus on:

- appointment scheduling,

- reminders,

- prescription refills,

- basic triage questions.

In all these cases, voice agents can take the first line of contact, give instant answers based on live systems and clear rules, and escalate more complex, emotionally charged or safety‑critical situations, such as medical concerns or serious complaints, to human staff.

Over time, this reduces queues at peak moments, frees human agents and clinicians for nuanced work, and gives organizations a more consistent way to apply policies across locations, campaigns and channels. The academic study mentioned earlier showed that call‑centre teams could handle the same or greater volume with a significantly lower average handle time once a voice assistant was introduced (7).

Conclusion: A Great AI Voice Agent Starts With Deep Customer Understanding

A great AI voice agent starts with understanding the people on the other side of the line. It is tempting to think first about models, latency and telephony integrations, and those matter, but they only create value when they are pointed at problems customers and patients actually have. The most effective systems are built from the outside in: by listening carefully to call recordings, mapping journeys across channels, and learning how different groups express urgency, confusion or vulnerability in their own words.

Once that understanding is in place, technology becomes a way to encode and scale it rather than to replace it. Conversation flows, guardrails and escalation rules can be designed to reflect how a specific organization wants to show up in retail, hospitality or healthcare, not just how a generic bot might respond. Telemetry, feedback loops and human‑in‑the‑loop handoffs then serve a clear purpose: they reveal where the system is aligned with real needs and where it drifts away from them. In that sense, AI voice agents are not a shortcut around customer understanding but a stress test of it.

The better you know your customers, and the people who serve them today, the more naturally your agents will fit into existing processes and the more invisible the technology will feel.